Surviving The Survivorship Bias

You too can be popular.

Here are three articles from respected sources that describe the common traits of innovative companies:

The 10 Things Innovative Companies Do To Stay On Top (Business Insider)

The World’s 10 Most Innovative Companies And How They Do It (Forbes)

Five Ways To Make Your Company More Innovative (Harvard Business School)

The purpose of these articles – as Forbes puts it – is to answer a simple question: “…what makes the difference for successful innovators?” It’s an interesting question and one that I would dearly love to answer clearly.

The implication is that, if your company studies these innovative companies and implements similar practices, well, then … your company will be innovative, too. It’s a nice idea. It’s also completely erroneous.

How do we know the reasoning is erroneous? Because it suffers from the survivorship fallacy. (For a primer on survivorship, click here and here). The companies in these articles are picked because they are viewed as the most innovative or most successful or most progressive or most something. They “survive” the selection process. We study them and abstract out their common practices. We assume that these common practices cause them to be more innovative or successful or whatever.

The fallacy comes from an unbalanced sample. We only study the companies that survive the selection process. There may well be dozens of other companies that use similar practices but don’t get similar results. They do the same things as the innovative companies but they don’t become innovators. Since we only study survivors, we have no basis for comparison. We can’t demonstrate cause and effect. We can’t say how much the common practices actually contribute to innovation. It may be nothing. We just don’t know.
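To make the unbalanced-sample problem concrete, here is a minimal simulation sketch. Everything in it is hypothetical: the company counts, the 80% adoption rate, the 5% success rate, and the function names are assumptions chosen for illustration, not data from any of the articles. Success is deliberately drawn independently of the practice, so the practice contributes nothing.

```python
import random

def simulate(n_companies=10_000, practice_rate=0.8, success_rate=0.05, seed=0):
    """Build hypothetical companies where success is independent of the practice."""
    rng = random.Random(seed)
    companies = []
    for _ in range(n_companies):
        uses_practice = rng.random() < practice_rate
        # Success is drawn without looking at uses_practice: no causal link.
        is_innovator = rng.random() < success_rate
        companies.append((uses_practice, is_innovator))
    return companies

def share_using_practice(companies):
    """Fraction of the given companies that use the practice."""
    return sum(1 for uses, _ in companies if uses) / len(companies)

companies = simulate()
survivors = [c for c in companies if c[1]]      # the "most innovative" list
others = [c for c in companies if not c[1]]     # the companies nobody writes about

print(f"Survivors using the practice: {share_using_practice(survivors):.0%}")
print(f"Everyone else using it:       {share_using_practice(others):.0%}")
```

If we looked only at the survivors, we would see that most of them share the practice and might conclude that it matters. The second line is the comparison the articles never make: in this made-up world, roughly the same share of non-innovators use the practice too.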

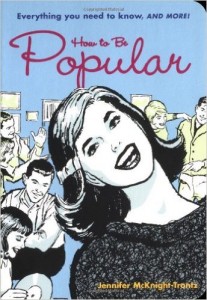

Some years ago, I found a book called How To Be Popular at a used-book store. Written for teenagers, it tells you what the popular kids do. If you do the same things, you too can be popular. It’s cute and fluffy and meaningless. In fact, to someone beyond adolescence, it’s obvious that it’s meaningless. Doing what the popular kids do doesn’t necessarily make you popular. We all know that.

I bought the book as a keepsake and a reminder that the survivorship fallacy can pop up at any moment. It’s obvious when it appears in a fluffy book written for teens. It’s less obvious when it appears in a prestigious business journal. But it’s still a fallacy.

Egotism and Awe

I’m in awe of my ego.

Are egotism and awe inversely related? As one goes up, does the other go down? Could egotism and awe be two ends of the same spectrum?

Let’s start with egotism. Is it going up or down? Are our kids more egotistic than we were at the same age? Are we more self-centered than our parents were?

There’s growing evidence that egotism is on the rise. William Chopik and his colleagues, for instance, researched long-term trends in egotism by analyzing every State of the Union address from 1790 to 2012. They counted the words and analyzed the number that showed self-interest (“me”, “mine”, “I”, “our”) compared to the number that showed interest in others (“you”, “your”, “his”, “theirs”). The ratio between the two became the “egocentricity index”.
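The counting idea is simple enough to sketch in a few lines. This is only a rough illustration, not the researchers' actual method: the word lists are just the examples quoted above (the study presumably used fuller lists), the function name is made up, and I'm assuming the ratio is self-focused words divided by other-focused words.

```python
import re

# Example words quoted in the post; assumed to be a small subset of the real lists.
SELF_WORDS = {"me", "mine", "i", "our"}
OTHER_WORDS = {"you", "your", "his", "theirs"}

def egocentricity_index(text):
    """Ratio of self-focused to other-focused word counts (assumed direction)."""
    words = re.findall(r"[a-z']+", text.lower())
    self_count = sum(1 for w in words if w in SELF_WORDS)
    other_count = sum(1 for w in words if w in OTHER_WORDS)
    if other_count == 0:
        return None  # the ratio is undefined without any other-focused words
    return self_count / other_count

sample = "I ask you to join me. Our future is yours and mine, and your work matters."
print(egocentricity_index(sample))
```

On this reading, a value above 1 means self-focused words outnumber other-focused words, and a value below 1 means the reverse.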

Up until 1900, other-focused words dominated, outnumbering self-focused words every year. In 1920, however, the trend reversed and self-focused words have outnumbered other-focused words ever since. Though the trend is inexorably upward, there are peaks and valleys. For instance, the index spikes after economic booms and slides during recessions. (The research article is here. A less academic summary is here.)

Why would we grow more egocentric over time? Perhaps the economy accentuates the trend. From 1960 to the present, American GNP per capita has roughly tripled. The egocentricity index hasn’t grown quite so quickly but it has accelerated compared to pre-1960 levels – just as the economy has.

Emily Bianchi’s research lends credence to this thought. Bianchi measured the narcissism of over 32,000 people aged 18 to 83. She found that those who had come of age (aged 18 to 25) during recessions were less likely to be narcissistic later in life compared to those who came of age in boom times. (The research article is here. A less academic summary is here.)

A number of other articles (for instance here, here, and here) suggest that egocentrism has increased significantly over the past 40 years or so.

And while egotism has been on the rise, what’s been happening to awe? According to Paul Piff and Dacher Keltner, it’s on the decline. And we should be worried about it.

Piff and Keltner argue that awe is a collective emotion “that motivates people to do things that enhance the greater good.” The authors have done a number of studies (here and here, for instance) that induce awe in research subjects and measure the effects. Those who experienced more awe were also more likely to help strangers, lend a hand after an accident, and share more resources.

Writing in the New York Times, Piff and Keltner suggest that our culture is “awe-deprived”. People spend less time staring at the stars, watching the Northern lights, camping out, or even just visiting art museums. Indeed, when was the last time you got awe-induced goose bumps?

The authors suggest that the overall reduction in awe is linked to a “broad societal shift” over the last 50 years. “People have become more individualistic, more self-focused, more materialistic and less connected to others.”

Did the rise in egocentrism cause the decline in awe? If so, could we reduce egocentrism by increasing awe in our culture? It’s hard to say but it’s certainly worth a try. Let’s head for the hills.

Seldom Right. Never In Doubt.

I’m never wrong. About anything.

Since I began teaching critical thinking four years ago, I’ve bought a lot of books on the subject. The other day, I wondered how many of those books I’ve actually read.

So, I made two piles on the floor. In one pile, I stacked all the books that I have read (some more than once). In the other pile, I stacked the books that I haven’t read.

Guess what? The unread stack is about twice as high as the other stack. In other words, I’ve read about a third of the books I’ve acquired on critical thinking and have yet to read about two-thirds.

What can I conclude from this? My first thought: I need to take a vacation and do a lot of reading. My second thought: Maybe I shouldn’t mention this to the Dean.

I also wondered, how much do I not know? Do I really know only a third of what there is to know about the topic? Maybe I know more since there’s bound to be some repetition in the books. Or maybe I know less since my modest collection may not cover the entire topic. Hmmm…

The point is that I’m thinking about what I don’t know rather than what I do know. That instills in me a certain amount of doubt. When I make assertions about critical thinking, I add cautionary words like perhaps or maybe or the evidence suggests. I leave myself room to change my position as new knowledge emerges (or as I acquire knowledge that’s new to me).

I suspect that the world might be better off if we all spent more time thinking about what we don’t know. And it’s not just me. The Dunning-Kruger effect states essentially the same thing.

David Dunning and Justin Kruger, both at Cornell, study cognitive biases. In their studies, they documented a bias that we now call illusory superiority. Simply put, we overestimate our own abilities and skills compared to others. More specifically, the less we know about a given topic, the more likely we are to overestimate our abilities. In other words, the less we know, the more confident we are in our opinions. As David Dunning succinctly puts it, “…incompetent people do not recognize—scratch that, cannot recognize—just how incompetent they are.”

The opposite seems to be true as well. Highly competent people tend to underestimate their competence relative to others. The thinking goes like this: If it’s easy for me, it must be easy for others as well. I’m not so special.

I’ve found that I can use the Dunning-Kruger effect as a rough-and-ready test of credibility. If a source provides a small amount of information with a high degree of confidence, then their credibility declines in my estimation. On the other hand, if the source provides a lot of information with some degree of doubt, then their credibility rises. It’s the difference between recognizing a wise person and a fool.

Perhaps we can use the same concept to greater effect in our teaching. When we learn about a topic, we implicitly learn about what we don’t know. Maybe we should make it more explicit. Maybe we should count the books we’ve read and the books we haven’t read to make it very clear just how much we don’t know. If we were less certain of our opinions, we would be more open to other people and intriguing new ideas. That can’t be a bad thing.

The Doctor Won’t See You Now

Shouldn’t you be at a meeting?

If you were to have a major heart problem – acute myocardial infarction, heart failure, or cardiac arrest – which of the following conditions would you prefer?

Scenario A – the failure occurs during the heavily attended annual meeting of the American Heart Association, when thousands of cardiologists are away from their offices; or

Scenario B – the failure occurs during a time when there are no national cardiology meetings and fewer cardiologists are away from their offices.

If you’re like me, you’ll probably pick Scenario B. If I go into cardiac arrest, I’d like to know that the best cardiologists are available nearby. If they’re off gallivanting at some meeting, they’re useless to me.

But we might be wrong. According to a study published in JAMA Internal Medicine (December 22, 2014), outcomes are generally better under Scenario A.

The study, led by Anupam B. Jena, looked at some 208,000 heart incidents that required hospitalization from 2002 to 2011. Of these, slightly more than 29,000 patients were hospitalized during national meetings. Almost 179,000 patients were hospitalized during times when no national meetings were in session.

And how did they fare? The study asked two key questions: 1) how many of these patients died within 30 days of the incident? and 2) were there differences between the two groups? Here are the results:

- Heart failure – statistically significant differences – 17.5% of heart failure patients in Scenario A died within 30 days versus 24.8% in Scenario B. The probability of this happening by chance is less than 0.1%.

- Cardiac arrest – statistically significant differences – 59.1% of cardiac arrest patients in Scenario A died within 30 days versus 69.4% in Scenario B. The probability of this happening by chance is less than 1.0%.

- Acute myocardial infarction – no statistically significant differences between the two groups. (There were differences but they may have been caused by chance).

The general conclusion: “High-risk patients with heart failure and cardiac arrest hospitalized in teaching hospitals had lower 30-day mortality when admitted during dates of national cardiology meetings.”

It’s an interesting study but how do we interpret it? Here are a few observations:

- It’s not an experiment – we can only demonstrate cause-and-effect using an experimental method with random assignment. But that’s impossible in this case. The study certainly demonstrates a correlation but doesn’t tell us what caused what. We can make educated guesses, of course, but we have to remember that we’re guessing.

- The differences are fairly small – we often misinterpret the meaning of “statistically significant”. It sounds like we found big differences between A and B; the differences, after all, are “significant”. But the term refers to probability not the degree of difference. In this case, we’re 99.9% sure that the differences in the heart failure groups were not caused by chance. Similarly, we’re 99% sure that the differences in the cardiac arrest groups were not caused by chance. But the differences themselves were fairly small.

- The best guess is overtreatment – what causes these differences? The best guess seems to be that cardiologists – when they’re not off at some meeting – are “overly aggressive” in their treatments. The New York Times quotes Anupam Jena: “…we should not assume … that more is better. That may not be the case.” Remember, however, that this is just a guess. We haven’t proven that overtreatment is the culprit.
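The second observation separates statistical significance from the size of a difference. One way to look at size directly is to compute it from the mortality percentages quoted earlier. The sketch below uses only those quoted figures; the helper name and dictionary keys are made up for illustration, and the results are simple arithmetic on the quoted rates, not the study's own estimates.

```python
# 30-day mortality figures as quoted in the post (percent).
mortality = {
    "heart failure": {"during_meetings": 17.5, "other_dates": 24.8},
    "cardiac arrest": {"during_meetings": 59.1, "other_dates": 69.4},
}

def effect_sizes(during_meetings, other_dates):
    """Return (absolute difference in percentage points, relative reduction)."""
    absolute = other_dates - during_meetings
    relative = absolute / other_dates  # fraction of the non-meeting rate
    return absolute, relative

for condition, rates in mortality.items():
    absolute, relative = effect_sizes(**rates)
    print(f"{condition}: {absolute:.1f} points lower, {relative:.0%} relative reduction")
```

The absolute difference and the relative reduction answer different questions, and neither one is the p-value. Whether a gap of a given size counts as small or large is a judgment call that the significance test can't make for us.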

It’s a good study with interesting findings. But what should we do about them? Should cardiologists change their behavior based on this study? Translating a study’s findings into policies and protocols is a big jump. We’re moving from the scientific to the political. We need a heavy dose of critical thinking. What would you do?

Are You The Boss Of You?

I am the master of my fate. Aren’t I?

Like so many teenagers, I once believed that “I am the master of my fate, I am the captain of my soul.” I could take control, think for myself, and guide my own destiny.

It’s a wonderful thought and I really want to believe it’s true. But I keep finding more and more hidden persuaders that manipulate our thinking in unseen ways. In some cases, we manipulate ourselves by mis-framing a situation. In other cases, other people do the work for us.

Consider these situations and ask yourself: Are you the boss of you?

- When you eat potato chips, are you thinking for yourself? Or is some canny food scientist manipulating you by steering you towards your bliss point?

- When you gamble in a casino, are you in control or is the environment influencing you to spend a little more and stay a little longer?

- When you eat chocolate, is it because you love the taste or because the microbes in your gut are controlling your behavior?

- When you play the slot machines, are you deciding how much to spend or is a sophisticated algorithm dispensing just enough winnings to keep you hooked? Are you being addicted in the machine zone?

- When you read social media, do you decide how long to stay on or does a slow, steady drip of dopamine keep you engaged?

- When you don’t eat fish for 20 years, is it because you’re allergic or did you just never think to test your own assumptions? Did you frame yourself?

- When you go rock climbing, is it because you’re an adventurous spirit or because your slow heartbeat tilts your life towards cheap thrills (and perhaps a life of crime)?

- When you chat with people in your neighborhood, do they express a wide range of opinions or do they all think more or less like you? When you surf the Internet, do you read only items that you agree with? Will that make you crazy?

- When you vote for a political candidate, is it because you have carefully considered all the issues and chosen the best candidate or because a cynical communications expert has got your goat with attributed belittlement?

- When you vote for stronger anti-crime laws, is it because you think they’ll actually work or are you succumbing to the vividness availability bias? (Vivid images of spectacular crimes are readily available to your memory so you vastly over-estimate their frequency).

- Why are so many restaurants painted red? Why is the woman in the picture (above) wearing a red outfit?

- Is the future ahead of you or behind you? Why did you make that choice?

- When you make a big decision for your company, you never let your biases get in the way, do you? Yet when other people make big decisions, they’re biased most of the time, aren’t they?

- When you choose a mate, are you thinking clearly or is sociobiology influencing your behavior?

- Did you carefully consider and consciously choose those activities that arouse you sexually or was the decision made for you by some combination of hormones, psychology, and genetics?

- Is your primary responsibility as an inhabitant of a given country to be a good citizen or a good consumer?

- When you buy something, is it because you need it or because you want it? Perhaps you’re being manipulated by Sigmund Freud’s nephew, Edward Bernays, the founder of public relations. Or perhaps you’ve been brandwashed.

In The Century of the Self, a British video documentary, Adam Curtis argues that we were hopelessly manipulated in the 20th century by slick followers of Freud who invented public relations. Of course, video is our most emotional and least logical medium. So perhaps Curtis is manipulating us to believe that we’ve been manipulated. It’s food for thought.

(The Century of the Self consists of four one-hour documentaries produced for the BBC. You can watch the first one, Happiness Machines, by clicking here).