Three Decision Philosophies

I’ll use the rational, logical approach for this one.

In my critical thinking classes, students get a good dose of heuristics and biases and how they affect the quality of our decisions. Daniel Kahneman and Amos Tversky popularized the notion that we should look at how people actually make decisions as opposed to how they should make decisions if they were perfectly rational.

Most of our decision-making heuristics (or rules of thumb) work most of the time, but when they go wrong, they do so in predictable and consistent ways. For instance, we’re not naturally good at judging risk. We tend to overestimate the risk of vividly scary events and underestimate the risk of humdrum, everyday problems. If we’re aware of these biases, we can account for them in our thinking and, perhaps, correct them.

The discovery that our economic decisions are often irrational has created a whole new field, generally known as behavioral economics. The field ties together concepts as diverse as the availability bias, the endowment effect, the confirmation bias, overconfidence, and hedonic adaptation to explain how people actually make decisions. Though it’s called economics, its basis is psychology.

So does this mean that traditional, rational, statistical, academic decision-making is dead? Well, not so fast. According to Justin Fox’s article in a recent issue of Harvard Business Review, there are at least three philosophies of decision-making and each has its place.

Fox acknowledges that, “The Kahneman-Tversky heuristics-and-biases approach has the upper hand right now, both in academia and in the public mind.” But that doesn’t mean that it’s the only game in town.

The traditional, rational, tree-structured logic of formal decision analysis hasn’t gone away. Fox argues that this classic approach, created by Ronald Howard, Howard Raiffa, and Ward Edwards, is best suited to making “Big decisions with long investment horizons and reliable data [as in] oil, gas, and pharma.” Fox notes that Chevron is a major practitioner of the art and that Nate Silver, famous for accurately predicting the 2012 elections, was using a Bayesian variant of the basic approach.
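Fox doesn’t walk through the mechanics, but the core move in formal decision analysis is to lay out your options as branches, attach probabilities and payoffs to the possible outcomes, and choose the branch with the highest expected value. Here’s a minimal Python sketch of that calculation. The `Outcome` class, the drilling scenario, and every number in it are hypothetical, chosen only to illustrate the idea.

```python
"""Toy decision tree: compare options by expected value (hypothetical numbers)."""
from dataclasses import dataclass


@dataclass
class Outcome:
    probability: float  # chance this outcome occurs
    payoff: float       # value if it occurs (e.g., millions of dollars)


def expected_value(outcomes: list[Outcome]) -> float:
    """Chance node: the probability-weighted average payoff."""
    total_p = sum(o.probability for o in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError(f"probabilities must sum to 1, got {total_p}")
    return sum(o.probability * o.payoff for o in outcomes)


def best_option(options: dict[str, list[Outcome]]) -> tuple[str, float]:
    """Decision node: pick the option whose expected value is highest."""
    scored = {name: expected_value(outs) for name, outs in options.items()}
    name = max(scored, key=scored.get)
    return name, scored[name]


if __name__ == "__main__":
    # Hypothetical drilling decision, not real industry data.
    options = {
        "drill": [Outcome(0.3, 500.0), Outcome(0.7, -100.0)],
        "don't drill": [Outcome(1.0, 0.0)],
    }
    print(best_option(options))
```

With these made-up numbers, drilling wins (an expected value of 80 versus 0) even though it fails most of the time. Real decision analysis goes further, for example by modeling attitudes toward risk and the value of gathering more information, but rolling expected values back through the tree is the heart of it.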

And what about non-rational heuristics that actually do work well? Let’s say, for instance, that you want to rationally allocate your retirement savings across N different investment options. Investing evenly in each of the N funds is typically just as good as any other approach. Known as the 1/N approach, it’s a simple heuristic that leads to good results. Similarly, in choosing between two options, selecting the one you’re more familiar with usually produces results that are no worse than any other approach, and does so more quickly and at much lower cost.
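Both heuristics are simple enough to write down in a few lines. Here’s a minimal Python sketch; the function names, the fund list, and the familiarity scores (a hypothetical count of how often you’ve encountered each option) are illustrations of my own, not anything Fox or the underlying research specifies.

```python
"""Two cheap heuristics: 1/N allocation and 'pick the more familiar option'."""
from typing import Optional


def one_over_n(amount: float, funds: list[str]) -> dict[str, float]:
    """The 1/N heuristic: split `amount` evenly across every fund."""
    if not funds:
        raise ValueError("need at least one fund")
    share = amount / len(funds)
    return {fund: share for fund in funds}


def pick_familiar(a: str, b: str, familiarity: dict[str, int]) -> Optional[str]:
    """Choose whichever of two options you know better.

    `familiarity` is a hypothetical score, e.g. how often you've encountered
    each option. Unknown options score 0. Returns None on a tie, when the
    heuristic can't decide and you need some other rule.
    """
    score_a, score_b = familiarity.get(a, 0), familiarity.get(b, 0)
    if score_a == score_b:
        return None
    return a if score_a > score_b else b


if __name__ == "__main__":
    print(one_over_n(12_000.0, ["stocks", "bonds", "real estate", "cash"]))
    print(pick_familiar("Acme Fund", "Obscure Fund", {"Acme Fund": 5}))
```

Notice how little either rule needs: no forecasts of returns, no estimates of risk, no elaborate model. That’s what makes them fast and cheap to apply.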

Fox calls this the “effective heuristics” approach or, more simply, the gut-feel approach. According to Fox, this approach is most effective “in predictable situations with opportunities for learning, [such as] firefighting, flying, and sports.” When you have plenty of practice in a predictable situation, your intuition can serve you well. In fact, I’d suggest that the famous (or infamous) interception at the goal line in this year’s Super Bowl resulted from exactly this kind of thinking.

And where does the heuristics-and-biases model fit best? According to Fox, it helps us to “Design better institutions, warn ourselves away from dumb mistakes, and better understand the priorities of others.”

So, we have three philosophies of decision-making and each has its place in the sun. I like the heuristics-and-biases approach because I like to understand how people actually behave. Having read Fox, though, I’ll be sure to add more on the other two philosophies in upcoming classes.

Do Smartphones Make Us Smarter, Dumber, Or Happier?

So which is it?

Smartphones:

- Make you lazy and dumb.

- Make the world more intelligent by adding massive amounts of new processing power.

- Both of the above.

- None of the above.

Smartphones have an incredible impact on how we live and communicate. They also illustrate a popular technology maxim: If it can be done, it will be done. In other words, they’re not going away. They’ll grow smaller and stronger and will burrow into our lives in surprising ways. The basic question: are they making humans better or worse?

Smarter or dumber?

Researchers at the University of Waterloo in Canada recently published a paper suggesting that smartphones “supplant thinking.” The researchers argue that humans are cognitive misers: we conserve our cognitive resources whenever possible. We let other people (or devices) do our thinking for us. We make maximum use of our extended mind. Why use up your brainpower when your extended mind, beyond your brain, can do it for you? (The original Waterloo paper is here. Less technical summaries are here and here.)

Though the researchers don’t use Daniel Kahneman’s terminology, there is an interesting parallel with his System 1 and System 2. They write that “…those who think more intuitively and less analytically [i.e. System 1] when given reasoning problems were more likely to rely on their Smartphones (i.e., extended mind) ….” In other words, System 1 thinkers are more likely to offload.

So, we use our phones to offload some of our processing. Is that so bad? We’ve always offloaded work to machines. Thinking is a form of work. Why not offload it and (potentially) reduce our cognitive load and increase our cognitive reserve? We could produce more interesting thoughts if we weren’t tied down with the scut work, couldn’t we?

Clay Shirky was writing in a different context, but that’s the essence of his concept of cognitive surplus. Shirky argues that people are increasingly using their free time to produce ideas rather than simply to consume ideas. We’re watching TV less and simultaneously producing more content on the web. Indeed, this website is an example of Shirky’s concept. I produce the website in my spare time. I have more spare time because I’ve offloaded some of my thinking to my extended mind. (Shirky’s book is here.)

Shirky assumes that creating is better than consuming. That’s certainly a culturally nuanced assumption, but it’s one that I happen to agree with. If it’s true, we should work to increase the intelligence of the devices that surround us. We can offload more menial tasks and think more creatively and collaboratively. That will help us invent more intelligent devices and expand our extended mind. It’s a virtuous circle.

But will we really think more effectively by offloading work to our extended mind? Or, will we forevermore watch reruns of The Simpsons?

I’m not sure which way we’ll go, but here’s how I’m using my smartphone to improve my life. Like many people, I consult my phone almost compulsively. I’ve taught myself to smile for at least ten seconds each time I do. My phone reminds me to smile. I’m not sure if that’s leading me to higher thinking or not. But it certainly brightens my mood.

Thinking About Thinking

Thinking about my thinking.

Let’s say your sweetie is feeling anxious or stressed or blue or just plain cranky. Would you help her?

Of course, you would. You might start by asking simple, straightforward questions, like: What’s going on? Why are you feeling down? How can I help? Simple, direct questions are effective because they’re thought-provoking. They can cover a lot of mental territory. Ambiguous questions help as well. They allow your sweetie to frame her response based on her needs, not yours.

Now, let’s change the frame. If you were feeling anxious or stressed or blue or just plain cranky, would you ask yourself the same questions? I’ve asked this of many people and the most common response seems to be: I don’t think I would think of doing that.

The trick here seems to be the ability to convert a monologue into a dialogue. We all have a little narrator in our heads who comments on what’s going on around us. I call mine the play-by-play announcer because he (she? it?) serves the same function as a sports announcer – narrating the action.

When I watch a sporting event on TV, I just want the narrator to explain what’s going on and why. I want the same of my internal narrator. I don’t normally question the sports narrator; I just go with the flow. I do the same with my internal narrator.

The narrator – whether sports or internal – is in a monologue. It takes an act of imagination to question the narrator. When I’m speaking to my sweetie, it’s natural and obvious to create a dialogue. When I’m speaking to myself, it’s not at all obvious. I don’t naturally think about my thinking.

I’m trying to change that. I’m trying to teach myself a new trick. When I notice certain cues, I ask myself simple, direct questions to better understand the experience. What are the cues? There are at least three clusters:

Cue 1 – when I’m feeling anxious or stressed or blue or just plain cranky. I’ve learned to take note of this condition and use it as a prompt to ask a simple question: Why am I feeling this way? This helps me bring my feelings and desires to a conscious level and sort them out logically. In Daniel Kahneman’s terminology, I’m using my System 2 to check on my System 1.

Cue 2 – when I’m feeling really good, energetic, or enthusiastic. I’d like to feel this way more often. So, when I’m in a great mood, I prompt myself to ask: How did this happen? I’ve discovered some interesting correlations – not all of which I’m going to share. The best correlation may be obvious: Suellen is often around.

Cue 3 – when I have a good idea. I like having good ideas. I feel productive, creative, and smart. So, when I have a good idea, I prompt myself to ask: What was I doing when this idea popped into my head? Again, I’ve discovered some interesting correlations. Most frequently, I’m moving rather than sitting still. I don’t know why that is, but I know it works.

I could probably apply the same introspection to other cues as well. At the moment, however, I’m just trying to master the trick under these three conditions. What about you? When do you think about your thinking?

Ebola and Availability Cascades

We can’t see it so it must be everywhere!

Which causes more deaths: strokes or accidents?

The way you consider this question speaks volumes about how humans think. When we don’t have data at our fingertips (i.e., most of the time), we make estimates. We do so by answering a question – but not the question we’re asked. Instead, we answer an easier question.

In fact, we make it personal and ask a question like this:

How easy is it for me to retrieve memories of people who died of strokes compared to memories of people who died in accidents?

Our logic is simple: if it’s easy to remember, there must be a lot of it. If it’s hard to remember, there must be less of it.

So, most people say that accidents cause more deaths than strokes. Actually, that’s dead wrong. As Daniel Kahneman points out, strokes cause twice as many deaths as all accidents combined.

Why would we guess wrong? Because accidents are more memorable than strokes. If you read this morning’s paper, you probably read about several accidental deaths. Can you recall reading about any deaths by stroke? Even if you read all the obituaries, it’s unlikely.

This is typically known as the availability bias – the memories are easily available to you. You can retrieve them easily and, therefore, you overestimate their frequency. Thus, we overestimate the frequency of violent crime, terrorist attacks, and government stupidity. We read about these things regularly so we assume that they’re common, everyday occurrences.
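A toy model makes the substitution concrete: estimate how common each cause of death is from how many examples you can recall, then compare that estimate to the actual proportions. In this Python sketch, the 2:1 stroke-to-accident ratio follows Kahneman’s figure; the recall counts are invented purely for illustration.

```python
"""Toy model of the availability bias. Recall counts are illustrative, not real data."""


def shares(counts: dict[str, float]) -> dict[str, float]:
    """Convert raw counts into proportions that sum to 1."""
    total = sum(counts.values())
    return {cause: n / total for cause, n in counts.items()}


def availability_estimate(recalled: dict[str, float]) -> dict[str, float]:
    """Judge how common each cause is by how easily examples come to mind.

    This answers the easier question ("what can I remember?") instead of
    the real one ("what actually happens?").
    """
    return shares(recalled)


# Relative deaths: strokes kill about twice as many people as all
# accidents combined (the ratio Kahneman cites). Units are arbitrary.
actual_deaths = {"stroke": 2.0, "accident": 1.0}

# Hypothetical: vivid stories you can recall from recent news coverage.
recalled_stories = {"stroke": 1.0, "accident": 10.0}

if __name__ == "__main__":
    actual = shares(actual_deaths)
    guessed = availability_estimate(recalled_stories)
    for cause in actual_deaths:
        print(f"{cause:8s} actual share: {actual[cause]:.2f}  "
              f"recall-based guess: {guessed[cause]:.2f}")
```

The recall-based guess puts accidents far ahead, while the actual shares put strokes ahead. The ranking flips simply because one kind of death makes the news more often.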

We all suffer from the availability bias. But when we suffer from it simultaneously and together, it can become an availability cascade – a form of mass hysteria. Here’s how it works. (Timur Kuran and Cass Sunstein coined the term availability cascade. I’m using Daniel Kahneman’s summary).

As Kahneman writes, an “… availability cascade is a self-sustaining chain of events, which may start from media reports of a relatively minor incident and lead up to public panic and large-scale government action.” Something goes wrong and the media reports it. It’s not an isolated incident; it could happen again. Perhaps it could affect a lot of people. Perhaps it’s an invisible killer whose effects are not evident for years. Perhaps you already have it. How would one know? Or perhaps it’s a gruesome killer that causes great suffering. Perhaps it’s not clear how one gets it. How can we protect ourselves?

Initially, the story is about the incident. But then it morphs into a meta-story. It’s about angry people who are demanding action; they’re marching in the streets and protesting in front of the White House. It’s about fear and loathing. Then experts get involved. But, of course, multiple experts never agree on anything. There are discrepancies in the stories they tell. Perhaps they don’t know what’s really going on. Perhaps they’re hiding something. Perhaps it’s a conspiracy. Perhaps we’re all going to die.

A story like this can spin out of control in a hurry. It goes viral. Since we hear about it every day, it’s easily available to our memories. Since it’s available, we assume that it’s very probable. As Kahneman points out, “…the response of the political system is guided by the intensity of public sentiment.”

Think it can’t happen in our age of instant communications? Go back and read the stories about Ebola in America. It’s a classic availability cascade. Chris Christie, the governor of New Jersey, reacted quickly, not because he needed to but because of the intensity of public sentiment. Our 24-hour news cycle needs something awful to happen at least once a day. So availability cascades aren’t going to go away. They’ll just happen faster.

Now You See It, But You Don’t

What don’t you see?

The problem with seeing is that you only see what you see. We may see something and try to make reasonable deductions from it. We assume that what we see is all there is. All too often, that assumption is completely wrong. We wind up making decisions based on partial evidence. Our conclusions are wrong and, very often, consistently biased: we make the same mistake in the same way over and over.

As Daniel Kahneman has taught us: what you see isn’t all there is. We’ve seen one of his examples in the story of Steve. Kahneman presents this description:

Steve is very shy and withdrawn, invariably helpful but with little interest in people or in the world of reality. A meek and tidy soul, he has a need for order and structure, and a passion for detail.

Kahneman then asks: is it more likely that Steve is a farmer or a librarian?

If you read only what’s presented to you, you’ll most likely guess wrong. Kahneman wrote the description to fit our stereotype of a male librarian. But male farmers outnumber male librarians by a ratio of about 20:1. Statistically, it’s much more likely that Steve is a farmer. If you knew the base rate, you would guess Steve is a farmer.
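Base rates can be folded in with Bayes’ rule. Written in odds form, the posterior odds equal the prior odds times the likelihood ratio, that is, how much more typical the description is of a librarian than of a farmer. The 20:1 ratio comes from the paragraph above; the likelihood ratio of 4 used in this Python sketch is a hypothetical value chosen for illustration.

```python
"""Steve: farmer or librarian? Bayes' rule in odds form."""


def posterior_librarian(farmers_per_librarian: float, likelihood_ratio: float) -> float:
    """Probability that Steve is a librarian, given his description.

    farmers_per_librarian: base rate (about 20 male farmers per male librarian).
    likelihood_ratio: P(description | librarian) / P(description | farmer),
        i.e., how much better the description fits a librarian (hypothetical).
    """
    prior_odds = 1.0 / farmers_per_librarian        # librarian : farmer, before reading
    posterior_odds = prior_odds * likelihood_ratio  # Bayes' rule in odds form
    return posterior_odds / (1.0 + posterior_odds)  # convert odds to a probability


if __name__ == "__main__":
    # Hypothetical: the description is 4x as typical of librarians as of farmers.
    p = posterior_librarian(farmers_per_librarian=20.0, likelihood_ratio=4.0)
    print(f"P(librarian) = {p:.2f}, P(farmer) = {1 - p:.2f}")
```

Under that assumption, Steve is still about five times more likely to be a farmer (roughly 0.17 for librarian versus 0.83 for farmer). The description would have to be more than 20 times as typical of librarians before librarian became the better bet.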

We saw a similar example with World War II bombers. Allied bombers returned to base bearing any number of bullet holes. To determine where to place protective armor, analysts mapped out the bullet holes. The key question: which sections of the bomber were most likely to be struck? Those are probably good places to put the armor.

But the analysts only saw planes that survived. They didn’t see the planes that didn’t make it home. If they made their decision based only on the planes they saw, they would place the armor in spots where non-lethal hits occurred. Fortunately, they realized that certain spots were under-represented in their bullet hole inventory – spots around the engines. Bombers that were hit in the engines often didn’t make it home and, thus, weren’t available to see. By understanding what they didn’t see, analysts made the right choices.
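The missing-planes problem is usually called survivorship bias, and a small simulation shows how it distorts the evidence. In this Python sketch, hits land evenly across four sections of a plane, but an engine hit is far more likely to bring the plane down. The section names, hits per plane, and lethality figures are all hypothetical, chosen only to show the mechanism; they are not historical data.

```python
"""Toy survivorship-bias simulation of the bomber story (hypothetical numbers)."""
import random
from collections import Counter

SECTIONS = ["engine", "fuselage", "wings", "tail"]

# Hypothetical chance that a single hit in each section brings the plane down.
LETHALITY = {"engine": 0.6, "fuselage": 0.05, "wings": 0.05, "tail": 0.05}


def simulate(n_planes: int = 10_000, hits_per_plane: int = 3,
             seed: int = 1) -> tuple[Counter, Counter]:
    """Return (holes on all planes, holes on planes that made it home)."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    all_holes: Counter = Counter()
    survivor_holes: Counter = Counter()
    for _ in range(n_planes):
        # Hits land uniformly at random across the sections.
        hits = [rng.choice(SECTIONS) for _ in range(hits_per_plane)]
        all_holes.update(hits)
        # The plane makes it home only if none of its hits is fatal.
        if all(rng.random() >= LETHALITY[h] for h in hits):
            survivor_holes.update(hits)
    return all_holes, survivor_holes


def share(counts: Counter, section: str) -> float:
    """Fraction of all recorded holes that fall in `section`."""
    total = sum(counts.values())
    return counts[section] / total if total else 0.0


if __name__ == "__main__":
    everyone, survivors = simulate()
    for s in SECTIONS:
        print(f"{s:9s} all planes: {share(everyone, s):.2f}  "
              f"survivors: {share(survivors, s):.2f}")
```

Across all planes, the engines take about a quarter of the hits, as you’d expect. Among the planes that come home, engine holes are much rarer: the gap is the signature of the planes you never get to see.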

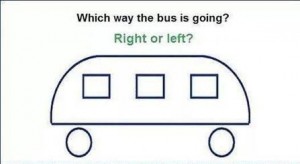

I like both of these examples, but they’re somewhat abstract and removed from our day-to-day experience. So, how about a quick test of our abilities? In the illustration above, which way is the bus going?

Study the image for a while. I’ll post the answer soon.