Computers Are Useless. They Only Give You Answers.

I’ve worked with some highly creative people during my career. I’ve also worked with very insightful thinkers, both in business and in academia. Oftentimes, the two skills overlap: creative people are also insightful thinkers and vice-versa. I’ve often wondered if creativity leads to insight or if insight leads to creativity. Lately, I’ve been thinking that there’s a third factor that produces both — the ability to ask useful questions.

Indeed, the title of today’s post is a quote from Pablo Picasso, who seemed both creative and insightful. His point — computers don’t help you ask questions … and questions are much more valuable than answers.

So, how do you ask good questions? Here are some tips from my experience augmented with suggestions by Shane Snow, Gary Lockwood, Penelope Trunk, and Peter Wood.

It’s not about you — too often, we ask long-winded questions designed to show our own knowledge and erudition. The point of asking a question is to gather information and insight. Be brief and don’t lead the witness.

You can contribute to a better answer — even if you ask a great question, you may not get a great answer. The response may wander both in time and logic, looping forward and backward. You can help the respondent by asking brief, clarifying questions. Don’t worry too much about interrupting; your respondent will likely appreciate your help.

Remember your who, what, where, when, how … and sometimes why — these words introduce open-ended questions that often result in more information and deeper insights. Be careful with why. Your respondent may become defensive.

Don’t go too narrow too soon — decision theory has a concept called premature commitment. We see a potential solution and start to pursue it while ignoring equally valid alternatives. It can happen in your questions as well. Start with broad questions to uncover all the alternatives. Then decide which one(s) to pursue.

Dumb questions are often the best — asking an (open-ended) question whose answer may seem obvious often uncovers unexpected insights. Even if you’re well versed in a subject, don’t assume you know the answer from the respondent’s perspective. He or she may have insights you know nothing about.

Be aware of your ambiguities — even simple, seemingly straightforward questions can be ambiguous. Your respondent may answer one question when you intended another. Here’s a simple example: what’s the tallest mountain in the world? There are two “correct” answers: Mt. Everest (if you measure from sea level) or Chimborazo (if you measure from the center of the earth). Which question is your respondent answering?

Think of parallel questions — I’m reading a Kinsey Millhone detective novel (U is for Undertow). One of the important questions Kinsey asks herself is, “why were the teenage boys burying a dog?” It gets her nowhere. But a slight tweak to the question — “Why were the boys burying a dog there?” — provides the insight that solves the mystery. (Reading detective novels is a good way to learn questioning techniques).

Clarify your terms — my sister is an entomologist. She knows that there’s a difference between a bug and an insect. I use the terms more or less interchangeably. If I ask her a question about bugs, she’ll answer it in the technical sense even though I mean it in the colloquial sense. We’re using the same word with two different meanings. It’s a good idea to ask, “When you talk about bugs, what do you mean?”

Think about how you answer questions — when you respond to questions, observe which ones are annoying and which ones lead to interesting insights. Stockpile the interesting ones for your own use.

Silence is golden — when speaking on the radio, I might say “over” to indicate that I’m finished speaking and it’s your turn. In normal conversation, we use body language and tone-of-voice to make the same transfer. Breaking the expected etiquette can lead to interesting insights. You ask a question. The respondent answers and turns it back to you. You remain silent. There’s an awkward pause and, often, the respondent continues the answer … in a less rehearsed and less controlled manner. Interesting tidbits may just spill out.

Don’t be too clever — Peter Wood probably says it best, “A few people have a gift for witty, memorable questions. You probably aren’t one of them. It doesn’t matter. A concise, clear question is an important contribution in its own right”.

A Joke About Your Mind’s Eye

Here’s a cute little joke:

The receptionist at the doctor’s office goes running down the hallway and says, “Doctor, Doctor, there’s an invisible man in the waiting room.” The Doctor considers this information for a moment, pauses, and then says, “Tell him I can’t see him”.

It’s a cute play on a situation we’ve all faced at one time or another. We need to see a doctor but we don’t have an appointment and the doc just has no time to see us. We know how it feels. That’s part of the reason the joke is funny.

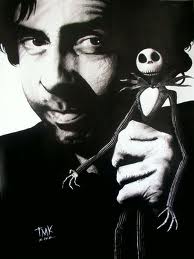

Now let’s talk about the movie playing in your head. Whenever we hear or read a story, we create a little movie in our heads to illustrate it. This is one of the reasons I like to read novels — I get to invent the pictures. I “know” what the scene should look like. When I read a line of dialogue, I imagine how the character would “sell” the line. The novel’s descriptions stimulate my internal movie-making machinery. (I often wonder what the interior movies of movie directors look like. Do Tim Burton’s internal movies look like his external movies? Wow.)

We create our internal movies without much thought. They’re good examples of our System 1 at work. The pictures arise based on our experiences and habits. We don’t inspect them for accuracy — that would be a System 2 task. (For more on our two thinking systems, click here). Though we don’t think much about the pictures, we may take action on them. If our pictures are inaccurate, our decisions are likely to be erroneous. Our internal movies could get us in trouble.

Consider the joke … and be honest. In the movie in your head, did you see the receptionist as a woman and the doctor as a man? Now go back and re-read the joke. I was careful not to give any gender clues. If you saw the receptionist as a woman and the doctor as a man (or vice-versa), it’s because of what you believe, not because of what I said. You’re reading into the situation and your interpretation may just be erroneous. Yet again, your System 1 is leading you astray.

What does this have to do with business? I’m convinced that many of our disagreements and misunderstandings in the business world stem from our pictures. Your pictures are different from mine. Diversity in an organization promotes innovation. But it also promotes what we might call “differential picture syndrome”.

So what to do? Simple. Ask people about the pictures in their heads. When you hear the term strategic reorganization, what pictures do you see in your mind’s eye? When you hear team-building exercise, what movie plays in your head? It’s a fairly simple and effective way to understand our conceptual differences and find common definitions for the terms we use. It’s simple. Just go to the movies together.

Systems Thinking versus Design Thinking

When I was in graduate school, I got a heavy dose of systems thinking. The basic idea is to take a problem, break it apart, and build it up. Let’s say I’m building a house. The house clearly is a system unto itself but we can also break it into subsystems — like plumbing. Plumbing is a logically coherent system with specified inputs and outputs. We can further deconstruct plumbing into more specific subsystems, like sewage versus potable water. As we deconstruct systems into subsystems, we look for linkages. How does one subsystem contribute to another? How do they build on each other?

We can also build upward into larger systems. The house, for instance, is part of a neighborhood which, in turn, is part of a city. The neighborhood also has wastewater systems and electrical systems that the house needs to connect to. If I want to get my mail delivered, it also needs an address — part of a much larger system of geographic designators.

It turns out that systems thinking is a pretty good way to build computer programs. A subroutine that calculates your sales tax, for instance, has specified inputs and outputs. It’s not logically different from a plumbing system. In either case, we start with a problem, break it down, build it up, and find a solution that fits with other systems. Note that we start with a problem and end with a solution.
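Here’s a minimal sketch of what I mean, in Python. The function names, the 8% rate, and the rounding rule are my own assumptions for illustration, not the rules of any particular tax system. The point is only that the subroutine has clearly specified inputs (a subtotal and a rate) and one output (the tax), so a larger system can connect to it without knowing how it works inside.

```python
from decimal import Decimal, ROUND_HALF_UP


def sales_tax(subtotal: Decimal, rate: Decimal) -> Decimal:
    """Input: a subtotal and a tax rate. Output: tax owed, rounded to the cent."""
    if subtotal < 0 or rate < 0:
        raise ValueError("subtotal and rate must be non-negative")
    # Rounding half-up to the nearest cent is an assumption for this sketch.
    return (subtotal * rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def order_total(subtotal: Decimal, rate: Decimal) -> Decimal:
    """A larger 'system' that uses the subroutine only through its inputs and output."""
    return subtotal + sales_tax(subtotal, rate)


if __name__ == "__main__":
    # Hypothetical figures: a 100.00 purchase taxed at 8%.
    print(sales_tax(Decimal("100.00"), Decimal("0.08")))
    print(order_total(Decimal("100.00"), Decimal("0.08")))
```

Like the plumbing, `order_total` doesn’t care how the tax is calculated. It only cares that the right thing comes out the other end.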

When Elliot went to architecture school, he got a heavy dose of design thinking. He’s now light years ahead of me. (Isn’t it great when your kid can teach you stuff?) I still find design thinking challenging. I think that’s because I was so heavily invested in systems thinking. Frankly, I didn’t realize how much systems thinking influenced my perspective. It’s like culture. You don’t recognize the deep influence of your own culture until you visit another culture and make comparisons. As I learned design thinking, I realized that there is a whole different way of seeing the world.

The trick with design thinking is that you begin with the solution and work your way backward to the problem. What a concept! Here’s what Wikipedia says:

“…the design way of problem solving starts with the solution in order to start to define enough of the parameters to optimize the path to the goal. The solution, then, is actually the starting point.”

And here’s what John Christopher Jones says in his classic book, Designing Designing:

“The main point of difference is that of timing. Both artists and scientists operate on the physical world as it exists in the present …Designers, on the other hand, are forever bound to treat as real that which exists only in an imagined future and have to specify ways in which the foreseen thing can be made to exist.”

Why would a business person be interested in design thinking? After all, most B-schools (and computer science programs) teach systems thinking. Unless you’re an architect, isn’t that enough? Well…. I’ve noticed that a lot of leading business thinkers now include designers on their teams. In yesterday’s post, I mentioned that A.G. Lafley of Procter & Gamble had designers (from IDEO) in his coterie of advisors. Similarly, was Steve Jobs more of a business genius or a design genius? Design thinkers give us a different way of looking at the world. Maybe we should take them more seriously in business.

Critical Thinking Through the Ages

As I’m teaching a course on critical thinking, I thought it would be useful to study the history of the concept. What have leading thinkers of the past conceived to be “critical thinking” and how have their perceptions changed over time?

One of the earliest, and most interesting, references that I’ve found is a sermon called the Kalama Sutta, preached by the Buddha some five centuries before Christ. Often known as the “charter of free inquiry”, it lays out general tenets for discerning what is true.

Many religions hold that truth is revealed through scriptures or through institutions that are authorized to interpret scriptures. By contrast, Buddhism generally asserts that we have to ascertain truth for ourselves. So, how do we do that?

That was essentially the question that the Kalama people asked the Buddha when he passed through their village of Kesaputta. The Buddha’s sermon emphasizes the need to question statements asserted to be true. Further, the Buddha goes on to list multiple sources of error and cautions us to carefully examine assertions from those sources. According to Wikipedia, the Buddha identified the following sources of error:

- Oral histories

- Tradition

- News sources

- Scripture or other official documents

- Supposition

- Dogmatism

- Common sense

- Opinion

- Experts

- Authorities or one’s own teacher

Further, “Do not accept any doctrine from reverence, but first try it as gold is tried by fire.” This requires examination, reflection, and questioning, and only that which is “conducive to the good” should be accepted as truth.

As Thanissaro Bhikkhu summarizes it, “any view or belief must be tested by the results it yields when put into practice; and — to guard against the possibility of any bias or limitations in one’s understanding of those results — they must further be checked against the experience of people who are wise.”

So how do the Buddhist commentaries compare to other philosophers? In the century after Buddha, Socrates is quoted as saying, “I know you won’t believe me, but the highest form of Human Excellence is to question oneself and others.” Almost 2,000 years later, Francis Bacon wrote, “Critical thinking is a desire to seek, patience to doubt, fondness to meditate, slowness to assert, readiness to consider, carefulness to dispose and set in order; and hatred for every kind of imposture.” A few decades later, Descartes wrote, “If you would be a real seeker after truth, it is necessary that at least once in your life you doubt, as far as possible, all things.” A hundred years after that, Voltaire wrote about the consequences of a failure of critical thinking, “Anyone who has the power to make you believe absurdities has the power to make you commit injustices.”

As the Swedes would say, there seems to be a “bright red thread” that ties all of these together. Go slowly. Ask questions. Be patient. Doubt your sources. Consider your own experience. Judge the evidence thoughtfully. For well over 2,000 years our philosophers — both Eastern and Western — have been saying essentially the same thing. It seems that we know what to do. Now all we have to do is to do it.

Librarian, Farmer, Debacle

In his book, Thinking, Fast and Slow, Daniel Kahneman has an interesting example of a heuristic bias. Read the description, then answer the question.

Steve is very shy and withdrawn, invariably helpful but with little interest in people or in the world of reality. A meek and tidy soul, he has a need for order and structure, and a passion for detail.

Is Steve more likely to be a librarian or a farmer?

I used this example in my critical thinking class the other night. About two-thirds of the students guessed that Steve is a librarian; one-third said he’s a farmer. As we debated Steve’s profession, the class focused exclusively on the information in the simple description.

Kahneman’s example illustrates two problems with the rules of thumb (heuristics) that are often associated with our System 1 thinking. The first is simply stereotyping. The description fits our widely held stereotype of male librarians. It’s easy to conclude that Steve fits the stereotype. Therefore, he must be a librarian.

The second problem is more subtle — what evidence do we use to draw a conclusion? In the class, no one asked for additional information. (This is partially because I encouraged them to reach a decision quickly. They did what their teacher asked them to do. Not always a good idea.) Rather they used the information that was available. This is often known as the availability bias — we make a decision based on the information that’s readily available to us. As it happens, male farmers in the United States outnumber male librarians by a ratio of about 20 to 1. If my students had asked about this, they might have concluded that Steve is probably a farmer — statistically at least.
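To see how much those numbers matter, here’s a rough sketch in Python. The 20-to-1 ratio comes from the example above. The two “fit” probabilities are numbers I made up purely for illustration: I’m assuming the description fits 40% of male librarians but only 5% of male farmers. The function name is hypothetical, too.

```python
def posterior_librarian(farmers_per_librarian: float,
                        p_fit_given_librarian: float,
                        p_fit_given_farmer: float) -> float:
    """Probability that Steve is a librarian, given that he fits the description.

    Simple Bayes' rule over two options (librarian or farmer), with the
    prior expressed as the number of farmers per one librarian.
    """
    librarian_weight = 1.0 * p_fit_given_librarian
    farmer_weight = farmers_per_librarian * p_fit_given_farmer
    return librarian_weight / (librarian_weight + farmer_weight)


if __name__ == "__main__":
    # Assumed for illustration: the description fits 40% of male librarians
    # and 5% of male farmers. Only the 20-to-1 ratio comes from the text.
    p = posterior_librarian(20, 0.40, 0.05)
    print(f"Chance Steve is a librarian: {p:.2f}")
```

Under those assumptions, the description is eight times more typical of a librarian, yet Steve is still more likely to be a farmer (the librarian probability comes out to roughly 0.29). With a 20-to-1 ratio, the description would have to be more than 20 times as typical of librarians before librarian becomes the better guess.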

The availability bias can get you into big trouble in business. To illustrate, I’ll draw on an example (somewhat paraphrased) from Paul Nutt’s book, Why Decisions Fail.

Peca Products is locked in a fierce competitive battle with its archrival, Frangro Enterprises. Peca has lost 4% market share over the past three quarters. Frangro has added 4% in the same period. A board member at Peca — a seasoned and respected business veteran — grows alarmed and concludes that Peca has a quality problem. She sends memos to the executive team saying, “We have to solve our quality problem and we have to do it now!” The executive team starts chasing down the quality issues.

The Peca Products executive team is falling into the availability trap. Because someone who is known to be smart and savvy and experienced says the company has a quality problem, the executives believe that the company has a quality problem. But what if it’s a customer service problem? Or a logistics problem? Peca’s executives may well be solving exactly the wrong problem. No one stopped to ask for additional information. Rather, they relied on the available information. After all, it came from a trusted source.

So, what to do? The first thing to remember in making any significant decision is to ask questions. It’s not enough to ask questions about the information you have. You also need to seek out additional information. Questioning also allows you to challenge a superior in a politically acceptable manner. Rather than saying “you’re wrong!” (and maybe getting fired), you can ask, “Why do you think that? What leads you to believe that we have a quality problem?” Proverbs says that “a soft answer turneth away wrath”. So does an insightful question.