An Evolutionary View of Innovation

My sister has a Ph.D. in biology. For her dissertation, she randomly divided fruit flies into two groups and treated them exactly the same except for one variable. She introduced a specific chemical to one group but not the other. Then she followed the effects through multiple generations. I don’t remember what she discovered but her method allowed her to conclusively link cause to effect.

Why did she choose fruit flies? Because she wanted to look at the effects of the chemical over multiple generations and fruit flies create generations quickly. She wanted to know not just how the chemical affected fruit flies. She wanted to know how the chemical affected the evolution of fruit flies.

Could we use evolutionary thinking to solve business problems in innovative ways? Well, there’s a theory that we could develop software more quickly and at less expense through evolutionary techniques.

First, we identify a problem that we want software to solve. Then we create, say, 10,000 identical sets of code. We introduce random variations into each set, execute the code, and then determine which set comes closest to solving the problem. We take the winner, make 10,000 copies, introduce random variations into each one, then execute the code. We pick the winner and repeat the process. It’s like breeding dogs, only less messy.

With modern computing power, we can generate thousands of generations in very short order. We could almost certainly solve the problem. Additionally, we might generate some very novel solutions. The random variation might lead us down paths that we never would have imagined on our own.
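To make the copy, vary, and select loop concrete, here is a minimal toy sketch in Python. It is only an illustration, not real evolutionary software: instead of evolving code, it evolves a short string toward a known target, and every name in it (`fitness`, `mutate`, `evolve`, the 200 copies per generation) is my own assumption. It also keeps the current winner in the running alongside its mutated copies, which is a small departure from the description above.

```python
import random
import string

ALPHABET = string.ascii_lowercase + " "


def fitness(candidate: str, target: str) -> int:
    """Count the positions where the candidate already matches the target."""
    return sum(c == t for c, t in zip(candidate, target))


def mutate(parent: str, rng: random.Random) -> str:
    """Copy the parent and overwrite one randomly chosen position."""
    i = rng.randrange(len(parent))
    return parent[:i] + rng.choice(ALPHABET) + parent[i + 1:]


def evolve(target: str, copies: int = 200, max_generations: int = 1000, seed: int = 0):
    """Return (best string found, number of generations used)."""
    rng = random.Random(seed)
    # Generation zero: a completely random string of the right length.
    winner = "".join(rng.choice(ALPHABET) for _ in target)
    for generation in range(max_generations):
        if winner == target:
            return winner, generation
        # Make many copies of the winner, each with one random variation.
        offspring = [mutate(winner, rng) for _ in range(copies)]
        # Assumption (not in the text above): the current winner competes too,
        # so a generation can never score worse than the one before it.
        winner = max(offspring + [winner], key=lambda c: fitness(c, target))
    return winner, max_generations


if __name__ == "__main__":
    best, generations = evolve("innovation")
    print(best, "after", generations, "generations")
```

The hard part in practice is the scoring step. Here the target is known in advance, so `fitness` is trivial. For real software, deciding which variation "comes closest to solving the problem" is where most of the work would go.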

While I suspect we’ll make evolutionary software before long, it does seem a bit exotic. Are there ways we could apply evolutionary thinking to solve more practical, day-to-day problems?

Sometimes I think it’s as simple as asking the question. Too often we make yes/no, either/or decisions – whether-or-not decisions as Chip Heath calls them. But we can always ask the question, is there an evolutionary way of looking at the problem? We might find that there are multiple sub-decisions we could make along the way to the big decision. We can decide smaller issues, test the results, and repeat the process. Each time we do, we create a new generation.

A “generation” in this sense might be a set of market trials, a series of studies, or surveys, or focus groups, or trial balloons. We can find many ways to identify and/or validate market needs. But first we have to ask the question. So the next time you participate in a big decision – especially a big risky decision – be sure to ask yourself, could an evolutionary approach help us here? The answer may be no, but don’t close the door too soon.

Gray Hair and Innovation

I’m just peaking.

How old are people when they’re at their innovative peak? I worked in the computing industry and we generally agreed that the most innovative contributors were under 30. Indeed, sometimes, they were quite a bit under 30.

Some of this is simply not knowing what can’t be done. I’ve seen this with Elliot. He doesn’t know how a computer is “supposed” to work. So he just tries things … and very often they work. On the other hand, I do know how a computer is supposed to work and I sometimes don’t try things because I “know” they won’t work. Elliot just doesn’t have the same limits on his thinking. That can be a great advantage in a new field.

While youth may be an advantage in software, that isn’t the case in many other fields. In pharmaceuticals, for instance, the most innovative people are in their 50s or even 60s. It takes that long to master the knowledge of biology, chemistry, and statistics needed to make original contributions. Comparatively speaking, it’s easy to master software.

Indeed, as knowledge gets more complicated, it takes longer to master. According to Benjamin F. Jones of the Kellogg School of Management, “The mean age at great achievement for both Nobel Prize winners and great technological inventors rose by about 6 years over the course of the 20th Century.” The average Nobel Prize winner now conducts his or her breakthrough research around the age of 38, though the prize is typically awarded many years later.

Aside from domain knowledge, why might you want a little gray hair to fuel innovation in your company? According to a recent article by Tom Agan in the New York Times, one reason is the time necessary to commercialize an innovation. As a general rule, the more fundamental an innovation, the longer it takes to commercialize. Ideas need to percolate. People need to be educated. Back-of-the-envelope sketches need to be prototyped. Lab results need to be scaled up. It takes time – perhaps as much as 20 to 30 years.

Who’s best at converting the idea to reality? Typically, it’s the person or persons who created the innovation in the first place. So, let’s say someone makes a breakthrough at the Nobel-average age of 38. You may need to keep them around until age 58 to proselytize, educate, socialize, realize, and monetize the idea. In the meantime, it’s likely that they will also enhance the idea and, just possibly, kick off a new round of innovation.

So, what to do? Once again, diversity pays. Mixing employees of multiple age groups can help stimulate new ways of thinking and better ways of communicating. Ultimately, I like Meredith Fineman’s advice: “Working hard, disruption, and the entrepreneurial spirit knows no age. To judge based upon it would be juvenile.”

Pour Me a House. Print Me a Cookie.

Print this!

When Elliot was in architecture school, he designed a chair in 3D software. Then he printed it. Then he sat in it. It held up pretty well.

As a designer, Elliot was an early adopter of 3D printing, also known as additive manufacturing. Elliot designs an object in 3D virtual space within a computer. (He’s an expert at this.) The object exists as a set of mathematical descriptions of lines, arcs, curves, shapes, and so on.

Elliot then exports the mathematical description of the object to a 3D printer. The printer converts the math into hundreds of very thin layers – essentially 2D slices. The printer head zips back and forth, laying down a slice with each pass to build the product physically. It’s called “additive manufacturing” because the printer adds a new layer with each pass.

The earliest such printers might have been called “subtractive manufacturing.” You started with a big block of wax in the “printer”. You then loaded the mathematical description and the printer carved away the unnecessary wax using very precise cutting blades. The result was the object modeled in wax. You used the model to build a mold for manufacturing.

Elliot used a printer equipped with a laser and some very special powder. Based on the mathematical description of the slices, the laser moved back and forth, firing at appropriate points to build each layer. On each pass, the laser converted the powder into a very strong, very hard resin that adhered to the previous layer. At the end, Elliot had the finished product, not just a mold.

Elliot’s chair looked and felt like it was made of plastic. Several companies are now experimenting with metal oxides in a similar process to print metal objects. A British company, Metalysis, is working with a titanium oxide that should allow you to print titanium objects. One benefit: it should dramatically reduce the cost of titanium parts and products.

Newer 3D printers can use a nozzle to extrude material onto each slice. What can you extrude? Well, cement, for instance. Construction companies in Europe are already using robotic arms and cement extruders to build complex walls and structures. It won’t be long before Elliot can design an entire house in virtual space and then have it poured on site. Elliot will be able to create much more imaginative designs and print them at a lower cost than traditional building techniques. What a great time to be an architect!

Not interested in cement? How about extruding some cookie dough instead? In fact, let’s imagine that you have some special dietary needs and restrictions. You submit your dietary data to the printer, which selects a mix of ingredients that meets your needs, and prints you a cookie. You can select ingredients based on your tastes as well as your dietary needs. What a great time to be a chef!

What’s next? GE recently announced that it would use additive manufacturing to create jet engine parts. Before long, we may be able to print new body parts. (I’m waiting for a new brain.) And 3D printing is coming to your home. What a great time to be a nerd!

Sustainability and Innovation

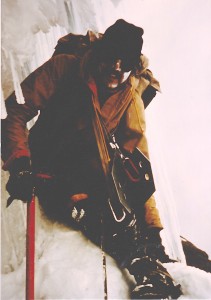

Nice axe!

When I lived in Ecuador, I climbed many of the highest peaks in the Andes. I carried an ice axe with a carbon steel blade and a shaft made of laminated bamboo. Why bamboo? Because it was very light and very, very strong. Little did I know, I was also using one of the most sustainable products in the world.

Who uses bamboo today? Dell Computer now creates packaging out of bamboo fibers rather than cardboard. Why? Partially because it’s very light and very strong. But mainly because it’s one of the fastest growing, least resource intensive fibers in the world. As with my ice axe, it’s highly sustainable.

Dell’s packaging is a small example of a wave of innovation that’s sweeping the manufacturing world. Companies realize that sustainability is increasingly important to their own survivability. It can also be an important competitive advantage within significant customer segments. Innovating for sustainability can deliver three significant benefits. First, it can reduce costs. Second, it can lead a company into new market segments. Third, those market segments are often willing to pay a premium for sustainable goods, which can mean higher margins.

According to a joint MIT and Boston Consulting Group study, interest in sustainability is growing partially because profits are growing. MIT/BCG have published the study yearly since 2010, when they first identified Sustainability Embracers “who firmly believe that sustainability is necessary to be competitive.” In 2010, 23% of the Embracers were already reporting profits from their sustainability innovations. By 2012, that number had risen to 37%.

To reduce costs, companies are increasingly asking their suppliers to reduce waste and energy use and simplify packaging. Customers — especially in Europe — are demanding sustainability “credentials”. Employees are also pressuring their employers to innovate for sustainability. Ultimately, sustainability may become a differentiator in efforts to recruit top talent.

Companies are also selling sustainability. According to the study, SAP, the huge business-to-business software company, now states that its purpose is sustainability. Peter Graf, SAP’s chief sustainability officer, says, “That is why we have started to … help clients optimize their energy requirements and natural resource use across their supply chains.” Helping customers implement Green Manufacturing has to be one of the biggest B2B software opportunities over the next decade.

Dell’s example is one of resource innovation — swapping a less sustainable component (cardboard) for a more sustainable one (bamboo). Many companies are also innovating their business models to achieve greater sustainability and greater benefits from sustainability. The innovations tend to come either in value chain improvements or in market segmentation. Companies that “pull these two levers” are more likely to see profits from their sustainability efforts.

There are still obstacles of course. Companies cite various hurdles: it’s difficult to quantify the benefits, sustainability conflicts with other priorities, it increases administrative costs, and, in some cases, it may increase overall production costs. Still, a growing segment of companies is investing in sustainability. Perhaps the best predictor of success is whether a company has written a formal business case for sustainability. Those that have tend to be the innovation leaders. They are also more likely to report that their sustainability investments are generating profits.

Interestingly, North American companies are not leading this innovation wave. Though Europe is ahead of America, the real leaders are companies in developing countries, especially in Africa. The MIT/BCG study suggests that this may well be “because these regions face significant resource scarcity and population growth challenges.” This may also be an example of “reverse innovation,” where innovations in poorer countries are adopted by richer countries rather than vice versa.

Platforms, Solutions, and Aggies

Typical land-grant graduate.

My father, who was the first in our family to go to college, went to a land-grant university (Texas A&M). My sister went to a land-grant university (Clemson). I went to a land-grant university (Delaware). My wife went to a land-grant university (Purdue). My wife’s parents went to a land-grant university (Wisconsin).

Abraham Lincoln signed the land-grant system into law with the Morrill Act of 1862. The federal government granted land to each state. The state used the land, or the proceeds from selling it, to set up a college to teach the practical arts, including agriculture, engineering, and military science.

The system worked. Land-grant colleges became social elevators that allowed lower- and middle-class kids to pursue higher education affordably. They also became engines of innovation, fueling a boom that catapulted the United States to leadership positions in multiple industries in the late 19th century. We’re still riding the echo of that boom. I’ve often wondered about the return on the land-grant investment. The economic value created by the system must be orders of magnitude higher than the original cost.

The genius of the system is that it’s a platform, not a solution. For instance, Lincoln didn’t identify the inefficient harvesting of cotton as a national problem and jump to the conclusion that the government should invest in the cotton gin. Instead, he created a platform that allowed many people to pursue an education, investigate problems, and develop solutions on their own.

I bring this up because we seem confused about what role the government should play in stimulating innovation. I hear it in my IT/innovation classes all the time. Some students argue that government should get out of the way and let private industry solve every problem “efficiently”. Others argue that government should have a role but they have a difficult time describing it.

Ultimately, I think it’s fairly simple. The government should invest in platforms, not solutions. The land-grant system allowed millions of people — including me — to take something from America and then turn around and make something for America. (It’s not true that we’re either makers or takers. We’re usually both.)

In the recent past, the best examples of platforms that stimulate innovation are probably the Internet and the Human Genome Project. The massive brain mapping project that President Obama recently announced, the BRAIN Initiative, could become the next great platform. On the other hand, the government investment in the solar panel manufacturer Solyndra was solution picking rather than platform building. It didn’t work so well.

So, I’m all for government investment in platforms that can stimulate innovation. By the way, I don’t claim that this is an original idea of mine. Steven Johnson makes much the same point in his book, Where Good Ideas Come From. But I do think it’s an idea that needs to be popularized. That’s why I’m writing about it. I hope you will, too. In the meantime, I’ll give credit where it’s due by saying, “Thank you Mr. Lincoln for helping my family get an education.”