Delayed Intuition – How To Hire Better

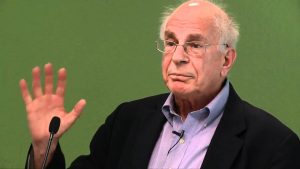

Daniel Kahneman is rightly respected for discovering and documenting any number of irrational human behaviors. Prospect theory – developed by Kahneman and his colleague, Amos Tversky – has led to profound new insights into how we think, behave, and spend our money. Indeed, there’s a straight line from Kahneman and Tversky to the new discipline called behavioral economics.

In my humble opinion, however, one of Kahneman’s innovations has been overlooked. The innovation doesn’t have an agreed-upon name so I’m proposing that we call it the Kahneman Interview Technique or KIT.

The idea behind KIT is fairly simple. We all know about confirmation bias – the tendency to attend to information that confirms what we already believe and to ignore information that doesn’t. Kahneman’s insight is that confirmation bias distorts job interviews.

Here’s how it works. When we meet a candidate for a job, we immediately form an impression. The distortion occurs because this first impression colors the rest of the interview. Our intuition might tell us, for instance, that the candidate is action-oriented. For the rest of the interview, we attend to clues that confirm this intuition and ignore those that don’t. Ultimately, we base our evaluation on our initial impressions and intuition, which may be sketchy at best. The result – as Google found – is that there is no relationship between an interviewer’s evaluation and a candidate’s actual performance.

To remove the distortion of our confirmation bias, KIT asks us to delay our intuition. How can we delay intuition? By focusing first on facts and figures. For any job, there are prerequisites for success that we can measure by asking factual questions. For instance, a salesperson might need to be: 1) well spoken; 2) observant; 3) technically proficient; and so on. An executive might need to be: 1) a critical thinker; 2) a good strategist; 3) a good talent finder; and so on.

Before the interview, we prepare factual questions that probe these prerequisites. We begin the interview with facts and develop a score for each prerequisite, typically on a simple scale like 1 to 5. The idea is not to record what the interviewer thinks but rather to record what the candidate has actually done. This portion of the interview is based on facts, not perceptions.

Once we have a score for each dimension, we can take the interview in more qualitative directions. We can ask broader questions about the candidate’s worldview and philosophy. We can invite our intuition to enter the process. At the end of the process, Kahneman suggests that the interviewer close her eyes, reflect for a moment, and answer the question, “How well would this candidate do in this particular job?”
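For readers who like to see a process written down, here is a rough Python sketch of the sequence described above: score each prerequisite on facts first, and only then allow the global, intuitive rating. The class name `KitInterview`, its method names, and the use of a 1 to 5 scale for the final rating are my own assumptions for illustration; Kahneman doesn’t prescribe any of them.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class KitInterview:
    """Hypothetical record of one interview; all names here are illustrative."""
    prerequisites: List[str]
    scores: Dict[str, int] = field(default_factory=dict)
    global_rating: Optional[int] = None

    def score(self, prerequisite: str, value: int) -> None:
        """Record a fact-based score (1 to 5) for one prerequisite."""
        if prerequisite not in self.prerequisites:
            raise KeyError(f"Unknown prerequisite: {prerequisite}")
        if not 1 <= value <= 5:
            raise ValueError("Scores must be between 1 and 5")
        self.scores[prerequisite] = value

    def facts_complete(self) -> bool:
        """True once every prerequisite has a factual score."""
        return all(p in self.scores for p in self.prerequisites)

    def record_global_rating(self, value: int) -> None:
        """Record the delayed, intuitive rating; refused until the facts are in."""
        if not self.facts_complete():
            raise RuntimeError("Score every prerequisite before using intuition")
        if not 1 <= value <= 5:  # assumed scale for the global rating
            raise ValueError("Rating must be between 1 and 5")
        self.global_rating = value

    def factual_average(self) -> float:
        """Average of the fact-based scores recorded so far."""
        return mean(self.scores.values())


# Example with the salesperson prerequisites mentioned earlier.
interview = KitInterview(["well spoken", "observant", "technically proficient"])
interview.score("well spoken", 4)
interview.score("observant", 3)
interview.score("technically proficient", 5)
interview.record_global_rating(4)  # allowed only now that all facts are scored
```

The one design choice worth noting is that `record_global_rating` raises an error if any prerequisite is still unscored. That is simply a way of making “delay your intuition” a rule rather than a good intention.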

Kahneman and other researchers have found that the factual scores are much better predictors of success than traditional interviews. Interestingly, the concluding global evaluation is also a strong predictor, especially when compared with “first impression” predictions. In other words, delayed intuition is better at predicting job success than immediate intuition. It’s a good idea to keep in mind the next time you hire someone.

I first learned about the Kahneman Interview Technique several years ago when I read Kahneman’s book, Thinking, Fast and Slow. But the book is filled with so many good ideas that I forgot about the interviews. I was reminded of them recently when I listened to the 100th episode of the podcast, Hidden Brain, which features an interview with Kahneman. This article draws on both sources.

Skeptical Spectacles and the Saintliness Rule

Manti Te’o is a linebacker for Notre Dame and widely regarded as one of the best players in college football. During the past season, a story emerged that his girlfriend had leukemia and lingered near death. She died just before a big Notre Dame game. But Te’o was loyal to his teammates and played through his heartbreak to help Notre Dame win the game and go undefeated in the regular season.

It’s a great story. Unfortunately, it’s not true. The girlfriend never existed. The blogosphere has been obsessing over whether Te’o is the perpetrator or the victim of the hoax. I have a different question: why did we believe the story in the first place?

I think we were fooled by Te’o for the same reasons we were fooled by Lance Armstrong, Greg Mortenson, and Bernie Madoff. We were active participants in the deception. We wanted to believe their stories. I’m an avid cyclist and I certainly wanted to believe Armstrong’s story. What a great story it was. It gave us faith in our human ability to overcome great obstacles. So I fell prey to confirmation bias. Consciously and subconsciously, I attended to evidence that confirmed my beliefs. I ignored evidence that contradicted them. When Armstrong finally came clean, I felt he had cheated me. I also realized I had cheated myself. I had a double dose of regret.

Of course, there are people whose marvelous stories don’t need embellishment. Mother Teresa certainly comes to mind. She’s already beatified and seems well on her way to sainthood. Nelson Mandela was probably politically expedient from time to time but, by and large, the legend fits the man. Jackie Robinson wasn’t a perfect man but he really did do what he was famous for. (Hmm … why am I having difficulty identifying a contemporary white male to put in this category?)

So, how do we distinguish between those who claim to be saintly and those who actually are? Here’s my proposed Saintliness Rule. When a story makes someone sound saintly, put on your skeptical spectacles. Use your filters that help you suspend belief (as opposed to disbelief). Be patient, review the evidence, be doubtful. Be skeptical but not cynical. After all, there really are some saints out there.