Effect and Cause

Is it clean yet?

I worry about cause and effect. If you get them backwards, you wind up chasing your tail. While you’re at it, you can create all kinds of havoc.

Take MS (please). We have long thought of multiple sclerosis as an autoimmune disease. The immune system interprets myelin – the fatty sheath around our nerves – as a threat and attacks it. As it eats away the myelin, it also impairs our ability to send signals from our brain to our limbs. The end result is often spasticity or even paralysis.

We don’t know the cause but the effect is clearly the malfunctioning immune system. Or maybe not. Some recent research suggests that a bacterium may be involved. It may be that the immune system is reacting appropriately to an infection. The myelin is simply an innocent bystander, collateral damage in the antibacterial attack.

The bacterium involved is a weird little thing. It’s difficult to spot. But it’s especially difficult to spot if you’re not looking for it. We may have gotten cause and effect reversed and been looking for a cure in all the wrong places. If so, it’s a failure of imagination as much as a failure of research. (Note that the bacterial findings are very preliminary, so let’s continue to keep our imaginations open).

Here’s another example: obsessive-compulsive disorder. In a recent article, Claire Gillan argues that we may have gotten cause and effect reversed. She summarizes her thesis in two simple sentences: “Everybody knows that thoughts cause actions which cause habits. What if this is the wrong way round?”

As Gillan notes, we’ve always assumed that OCD behaviors were the effect. It seemed obvious that the cause was irrational thinking and, especially, fear. We’re afraid of germs and, therefore, we wash our hands obsessively. We’re afraid of breaking our mother’s back and, therefore, we avoid cracks in the sidewalk. Sometimes our fears are rooted in reality. At other times, they’re completely delusional. Whether real or delusional, however, we’ve always assumed that our fears caused our behavior, not the other way round.

In her research on OCD behavior, Gillan has made some surprising discoveries. When she induced new habits in volunteers, she found that people with OCD changed their beliefs to explain the new habit. In other words, behavior is the cause and belief is the effect.

Traditional therapies for OCD have sought to address the fear. They aimed to change the way people with OCD think. But perhaps traditional therapists need to change their own thinking. Perhaps by changing the behaviors of people with OCD, their thinking would (fairly naturally) change on its own.

This is, of course, quite similar to the idea of confabulation. With confabulation, we make up stories to explain the world around us. It gives us a sense of control. With OCD – if Gillan is right – we make up stories to explain our own behavior. This, too, gives us a sense of control.

Now, if we could just get cause and effect straight, perhaps we really would have some control.

Survivorship Bias

Protect the engines.

Are humans fundamentally biased in our thinking? Sure, we are. In fact, I’ve written about the 17 biases that consistently crop up in our thinking. (See here, here, here, and here). We’re biased because we follow rules of thumb (known as heuristics) that are right most of the time. But when they’re wrong, they’re wrong in consistent ways. It helps to be aware of our biases so we can correct for them.

I thought my list of 17 provided a complete accounting of our biases. But I was wrong. In fact, I was biased. I wanted a complete list so I jumped to the conclusion that my list was complete. I made a subtle mistake and assumed that I didn’t need to search any further. But, in fact, I should have continued my search.

The latest example I’ve discovered is called the survivorship bias. Though it’s new to me, it’s old hat to mathematicians. In fact, the example I’ll use is drawn from a nifty new book, How Not to Be Wrong: The Power of Mathematical Thinking by Jordan Ellenberg.

Ellenberg describes the problem of protecting military aircraft during World War II. If you add too much armor to a plane, it becomes a heavy, slow target. If you don’t add enough armor, even a minor scrape can destroy it. So what’s the right balance?

American military officers gathered data from aircraft as they returned from their missions. They wanted to know where the bullet holes were. They reasoned that they should place more armor in those areas where bullets were most likely to strike.

The officers measured bullet holes per square foot. Here’s what they found:

| Section of plane | Bullet holes per square foot |
| --- | --- |
| Engine | 1.11 |
| Fuel system | 1.55 |
| Fuselage | 1.73 |
| Rest of plane | 1.8 |

Based on these data, it seems obvious that the fuselage is the weak point that needs to be reinforced. Fortunately, they took the data to the Statistical Research Group, a stellar collection of mathematicians organized in Manhattan specifically to study problems like these.

The SRG’s recommendation was simple: put more armor on the engines. Their recommendation was counter-intuitive to say the least. But here’s the general thrust of how they got there:

- In the confusion of air combat, bullets should strike almost randomly. Bullet holes should be more-or-less evenly distributed. The data show that the bullet holes are not evenly distributed. This is suspicious.

- The data were collected from aircraft that returned from their missions – the survivors. What if we included the non-survivors as well?

- There are fewer bullet holes on engines than one would expect. There are two possible explanations: 1) bullets don’t strike engines for some unexplained reason; or 2) bullets that strike engines tend to destroy the airplane, so those planes don’t return and are not included in the sample.

Clearly, the second explanation is more plausible. Conclusion: the engine is the weak point and needs more protection. The Army followed this recommendation and probably saved thousands of airmen’s lives.
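Here’s a rough way to see the SRG’s logic in action. This is only a sketch: the section areas, the per-hit loss probabilities, and the `simulate` function are all numbers and names I made up for illustration, not anything from the SRG’s data. Bullets land at random in proportion to area, but a hit on the engine is far more likely to bring the plane down. We then count holes per square foot twice: once on every plane, and once on only the planes that made it home.

```python
import random

# Hypothetical section areas (square feet) and chances that a single hit
# downs the plane. These values are invented for illustration only.
AREAS = {"engine": 40, "fuel system": 30, "fuselage": 100, "rest of plane": 130}
LOSS_PER_HIT = {"engine": 0.30, "fuel system": 0.15, "fuselage": 0.03, "rest of plane": 0.02}


def simulate(n_planes=20000, hits_per_plane=6, seed=1):
    """Return (holes per sq ft on all planes, holes per sq ft on survivors)."""
    rng = random.Random(seed)
    sections = list(AREAS)
    weights = [AREAS[s] for s in sections]  # bigger sections catch more bullets
    all_hits = dict.fromkeys(sections, 0)
    survivor_hits = dict.fromkeys(sections, 0)
    survivors = 0

    for _ in range(n_planes):
        hits = rng.choices(sections, weights=weights, k=hits_per_plane)
        # The plane is lost if any one of its hits proves fatal.
        downed = any(rng.random() < LOSS_PER_HIT[s] for s in hits)
        for s in hits:
            all_hits[s] += 1
        if not downed:
            survivors += 1
            for s in hits:
                survivor_hits[s] += 1

    density_all = {s: all_hits[s] / (n_planes * AREAS[s]) for s in sections}
    density_survivors = {s: survivor_hits[s] / (survivors * AREAS[s]) for s in sections}
    return density_all, density_survivors


density_all, density_survivors = simulate()
for section in AREAS:
    print(f"{section:14s} all planes: {density_all[section]:.4f}   "
          f"survivors: {density_survivors[section]:.4f}")
```

Across all planes, the holes are spread roughly evenly. Among the survivors, the engines look suspiciously clean, precisely because the planes with engine hits tended not to come back.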

It’s a colorful example but may seem distant from our everyday experiences. So, here’s another example from Ellenberg’s book. Let’s say we want to study the ten-year performance of a class of mutual funds. So, we select data from all the mutual funds in the category from 2004 as the starting point. Then we collect similar data from 2014 as the end point. We calculate the percentage growth and reach some conclusions. Perhaps we conclude that this is a good investment category.

What’s the error in our logic? We’ve left out the non-survivors – funds that existed in 2004 but shut down before 2014. If we include them, overall performance scores may decline significantly. Perhaps it’s not such a good investment after all.
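To make the arithmetic concrete, here’s a small sketch with five made-up funds. The fund names, values, and the `average_growth_pct` helper are hypothetical; I’m also assuming, for simplicity, that a fund that shut down is valued at whatever it was worth when it closed.

```python
# Hypothetical funds: (name, value in 2004, value in 2014 or at shutdown,
# still open in 2014?). All figures are invented for illustration.
FUNDS = [
    ("A", 100.0, 180.0, True),
    ("B", 100.0, 150.0, True),
    ("C", 100.0, 130.0, True),
    ("D", 100.0, 60.0, False),  # closed before 2014
    ("E", 100.0, 40.0, False),  # closed before 2014
]


def average_growth_pct(funds):
    """Average percentage growth across the given funds."""
    growths = [(end - start) * 100 / start for _, start, end, _ in funds]
    return sum(growths) / len(growths)


survivors_only = average_growth_pct([f for f in FUNDS if f[3]])
all_funds = average_growth_pct(FUNDS)

print(f"Survivors only: {survivors_only:.1f}%")
print(f"All funds:      {all_funds:.1f}%")
```

In this toy example, the survivors alone show average growth of about 53%, while the full 2004 class shows only 12%. Same category, very different story.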

What’s the lesson here? Don’t jump to conclusions. If you want to survive, remember to include the non-survivors.

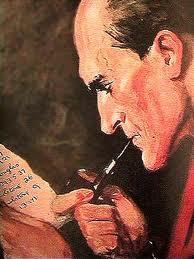

Don’t Beat Yourself Up (Too Much)

Compared to this guy, I’m a great driver.

I’m a pretty good driver. How do I know? I can observe other drivers and compare their skills to mine. I see them making silly mistakes. I (usually) avoid those mistakes myself. QED: I must be a better-than-average driver. I’d like to stay that way and that motivates me to practice my driving skills.

Using observation and comparison, I can also conclude that I’m not a very good basketball player. I can observe what other players do and compare their skills to mine. They’re better than I am. That may give me the motivation to practice my hoops skills.

Using observation and comparison I can conclude that I’m better at driving the highway than at driving the lane. But how do I know if I’m a good thinker or not? I can’t observe other people thinking. Indeed, according to many neuroscientists, I can’t even observe myself thinking. System 1 thinking happens below the level of conscious awareness. So I can’t observe and compare.

Perhaps I could compare the results of thinking rather than thinking itself. People who are good thinkers should be more successful than those who aren’t, right? Well, maybe not. People might be successful because they’re lucky or charismatic, or because they were born to the right parents in the right place. I’m sure that we can all think of successful people who aren’t very good thinkers.

So, how do we know if we’re good thinkers or not? Well, most often we don’t. And, because we can’t observe and compare, we may not have the motivation to improve our thinking skills. Indeed, we may not realize that we can improve our thinking.

I see this among the students in my critical thinking class. Students will have varying opinions about their own thinking skills. But most of them have not thought about their thinking and how to improve it.

Some of my students seem to think they’re below average thinkers. In their papers, they write about the mistakes they’ve made and how they berate themselves for poor thinking. They can’t observe other people making the same mistake so they assume that they’re the only ones. Actually, the mistakes seem fairly commonplace to me and I write a lot of comments along these lines: “Don’t beat yourself up over this. Everybody makes this mistake.”

Some of my students, of course, think they’re above average thinkers. Some (though not many) think they’re about average. But I think the single largest group – maybe not a majority but certainly a plurality – think they’re below average.

I realized recently that the course aims to build student confidence (and motivation) by making thinking visible. When we can see how people think, then we can observe and compare. So we look at thinking processes and catalog the common mistakes people make. As we discuss these patterns, I often hear students say, “Oh, I thought I was the only one to do that.”

In general, students get the hang of it pretty quickly. Once they can observe external patterns and processes, they’re very perceptive about their own thinking. Once they can make comparisons, they seem highly motivated to practice the arts of critical thinking. It’s like driving or basketball – all it takes is practice.

Eighteen Debacles

Debacles happen.

We have two old sayings that directly contradict each other. On the one hand, we say, “Look before you leap.” On the other hand, “He who hesitates is lost.” So which is it?

I wasn’t thinking about this conundrum when I assigned the debacles paper in my critical thinking class. Even so, I got a pretty good answer.

The critical thinking class has two fundamental streams. First, we study how we think and make decisions as individuals, including all the ways we trick ourselves. Second, we study how we think and make decisions as organizations, including all the ways we trick each other.

For organizational decision making, students write a paper analyzing a debacle. For our purposes, a debacle is defined by Paul Nutt in his book Why Decisions Fail: “… a decision riddled with poor practices producing big losses that becomes public.” I ask students to choose a debacle, use Nutt’s framework to analyze the mistakes made, and propose how the debacle might have been prevented.

Students can choose “public” debacles as reported in the press or debacles that they have personally observed in their work. In general, students split about half and half. Popular public debacles include Boston’s Big Dig, the University of California’s logo fiasco, Lululemon’s see-through pants, the Netflix rebranding effort, JC Penney’s makeover, and the Gap logo meltdown. (What is it with logos?)

This quarter, students analyzed 18 different debacles. As I read the papers, I kept track of the different problems the students identified and how frequently they occurred. I was looking specifically for the “blunders, traps, and failure-prone practices” that Nutt identifies in his book.

Five of Nutt’s issues were reported in 50% or more of the papers. Here’s how they cropped up along with Nutt’s definition of each.

Premature commitment – identified in 13 papers or 72.2% of the sample. Nutt writes that “Decision makers often jump on the first idea that comes along and then spend years trying to make it work. …When answers are not readily available grabbing onto the first thing that seems to offer relief is a natural impulse.” (I’ve also written about this here).

Ambiguous direction – 11 papers or 61.1%. Nutt writes, “Direction indicates a decision’s expected result. In the debacles [that Nutt studied], directions were either misleading, assumed but never agreed to, or unknown.”

Limited search, no innovation – ten papers or 55.6%. According to Nutt, “The first seemingly workable idea … [gets] adopted. Having an ‘answer’ eliminates ambiguity about what to do but stops others from looking for ideas that could be better.”

Failure to address key stakeholders’ claims – ten papers or 55.6%. Stakeholders make claims based on opportunities or problems. The claims may be legitimate or they may be politically motivated. They may be accurate or inaccurate. Decision makers need to understand the claims as thoroughly as possible. Failure to do so can alienate the stakeholders and produce greater contention in the process.

Issuing edicts – nine papers or 50%. Nutt: “Using an edict to implement … is high risk and prone to failure. People who have no interest in the decision resist it because they do not like being forced and they worry about the precedent that yielding to force sets.”
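For anyone who wants to check my tally, here’s a quick sketch of the arithmetic. The counts come from the 18 papers described above; the `share_pct` helper is just a name I made up. Each share is the number of papers that flagged an issue divided by 18, rounded to one decimal place (so ten of 18 rounds to 55.6%).

```python
TOTAL_PAPERS = 18

# Number of papers (out of 18) that identified each of Nutt's issues.
COUNTS = {
    "Premature commitment": 13,
    "Ambiguous direction": 11,
    "Limited search, no innovation": 10,
    "Failure to address key stakeholders' claims": 10,
    "Issuing edicts": 9,
}


def share_pct(count, total=TOTAL_PAPERS):
    """Percentage of papers reporting an issue, rounded to one decimal."""
    return round(count / total * 100, 1)


shares = {issue: share_pct(n) for issue, n in COUNTS.items()}
for issue, pct in shares.items():
    print(f"{issue}: {pct}%")
```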

As you make your management decisions, keep these Big Five in mind. They occur regularly, they’re inter-related, and they seem to cut deeply. The biggest issue is premature commitment. If you jump on an idea before its time, you’re more likely to fail than succeed. So, perhaps we’ve shown that look before you leap is better wisdom than he who hesitates is lost.

Seeing And Observing Sherlock

Pardon me while I unitask.

I’m reading a delightful book by Maria Konnikova, titled Mastermind: How To Think Like Sherlock Holmes. It covers much of the same territory as other books I’ve read on thinking, deducing, and questioning but it reads more like … well, like a detective novel. In other words, it’s fun.

In the past, I’ve covered Daniel Kahneman’s book, Thinking, Fast and Slow. Kahneman argues that we have two thinking systems. System 1 is fast and automatic and always on. We make millions of decisions each day but don’t think about the vast majority of them; System 1 handles them. System 1 is right most of the time but not always. It uses rules of thumb and makes common errors (which I’ve cataloged here, here, here, and here).

System 1 can also invoke System 2 – the system we think of when we think of thinking. System 2 is where we logically process data, make deductions, and reach conclusions. It’s very energy intensive. Thinking is tiring, which is why we often try to avoid it. Better to let System 1 handle it without much conscious thought.

Kahneman illustrates the differences between System 1 and System 2. Konnikova covers some of the same territory but with slightly different terminology. Konnikova renames System 1 as System Watson and System 2 as System Holmes. Konnikova proceeds to analyze System Holmes and reveal what makes it so effective.

Though I’m only a quarter of the way through the book, I’ve already gleaned a few interesting tidbits, such as these:

Motivation counts – motivated thinkers are more likely to invoke System Holmes. Less motivated thinkers are willing to let System Watson carry the day. Konnikova points out that thinking is hard work. (Kahneman makes the same point repeatedly). Motivation helps you tackle the work.

Unitasking trumps multitasking – Thinking is hard work. Thinking about multiple things simultaneously is extremely hard work. Indeed, it’s virtually impossible. Konnikova notes that Holmes is very good at one essential skill: sitting still. (Pascal once remarked that, “All of man’s problems stem from his inability to sit still in a room.” Holmes seems to have solved that problem).

Your brain attic needs a spring cleaning – we all have lots of stuff in our brain attics and – like the attics in our houses – a lot of it is not worth keeping. Holmes keeps only what he needs to do the job that motivates him.

Observing is different from seeing – Watson sees. Holmes observes. Exactly how he observes is a complex process that I’ll report on in future posts.

Don’t worry. I’m on the case.