Will Social Media Make You Crazy?

This person has been edited.

Social media has been getting a bad rap in the press recently. First, there’s The Innovation of Loneliness, a terrific short film (just over four minutes) by Shimi Cohen. It suggests that one of the key differences between real life and social-media life is the ability to edit. We can edit ourselves on social media and put our best foot forward. Not so in real life and, according to Cohen, that’s what makes real life superior. Simply put: in real life you interact with real people, not edited people.

Cohen, whose film is based on Sherry Turkle’s book Alone Together, urges us to slow down and have actual conversations with others. He also explains (though never names) the concept of Dunbar’s Number: that humans naturally organize themselves into groups of 150 or fewer. He implies that any group larger than 150 is too much for the human mind to handle. So what’s the point of having, say, 500 friends on Facebook?

Cohen’s film reminded me of the many articles I’ve read on mindfulness recently. For instance, Scientific American Mind recently led with mindfulness as its cover story. I’ve also been reading Mindfulness for Beginners by Jon Kabat-Zinn. The common thread is the admonition to slow down and, as Kabat-Zinn phrases it, “reclaim the present moment.” These writings don’t specifically suggest that social media is bad for you but it’s an easy conclusion to draw.

A research article recently published in the Public Library of Science (PLoS) is much more specific. In fact, its title pretty much says it all: “Facebook Use Predicts Declines in Subjective Well-Being Among Young Adults”. (The original research paper is here. For non-technical summaries, click here for an article from The Economist or here for one from the L.A. Times).

The study tracked 82 young people as they used Facebook. They were also asked to report their “satisfaction with life” at the beginning and end of the study. Bottom line: “…the more they used Facebook [during the study period], the more their life satisfaction levels declined over time.”

The PLoS study doesn’t identify the causes of the decline in life satisfaction. But The Economist points to an earlier study to identify a likely culprit. The study, conducted in Germany, found that “…the most common emotion aroused by using Facebook is envy”. The Economist makes essentially the same point that Shimi Cohen does:

Endlessly comparing themselves with peers who have doctored their photographs, amplified their achievements and plagiarised their bons mots can leave Facebook’s users more than a little green-eyed. Real-life encounters, by contrast, are more WYSIWYG (what you see is what you get).

So should we drop social media? As I wrote several months ago, I still think it’s a good way to stay in touch with our “Christmas card friends” – those friends whom we like but don’t see very often. For our bosom buddies, on the other hand, it’s probably better to just slow down and have a nice, mindful heart-to-heart conversation.

Brain Porn

This is your brain on brain porn.

I like to think about our brains. The way the brain functions influences our creativity and communication. These influence our ability to innovate. Innovation influences our business success. It’s all linked together in one continuum, though teasing out how the links actually work is exceedingly difficult.

Since I’m not a neuroscientist by training, I read science-for-the-layperson materials. I enjoy Oliver Sacks, Daniel Kahneman, Peter Facione, Christopher Chabris, Daniel Simons, James Surowiecki, Steven Pinker, and James Gleick to name a few. But I often wonder just how much of what I read is accurate. Is some of it dumbed down for the non-scientist? What can we trust and what should we be suspicious of?

It turns out that there’s a lot of “brain porn” out there. Also known as “folk neuroscience”, this stuff oversimplifies and gives a false sense of certitude. It seems so clear, for instance, that a man who is short on oxytocin will have a rocky romantic life. After all, oxytocin is the “love hormone”. If you don’t have enough, how good a lover can you be?

We humans love to make up stories to explain cause and effect. Some of our stories are even true. In many cases, however, we don’t really know what causes what. It’s complicated. Still, we have a deep-seated need to create backstories that explain why we are the way we are. Neuroscience fits our need perfectly; it appears to give ultimate explanations. We behave a certain way because we’re “hardwired” to do so. We have an imbalance in our brain chemistry and therefore we behave antisocially (or immaturely or irrationally or generously, etc.)

Our desire for an explanation is also a desire for a cure. If an imbalance in our brain chemistry causes antisocial behavior, then all we have to do is learn how to rebalance our brain chemistry. As in A Clockwork Orange, we might turn ultraviolent criminals into well-behaved citizens. All we need to do is understand the brain better and we can make ourselves “better”. A fundamental question is: who gets to define “better”?

So how wrong is brain porn? Vaughan Bell wrote an excellent article in The Guardian that itemizes some basic misunderstandings. For instance, it’s not true that the left brain is rational and the right brain creative. We really do need both sides of our brains if we want to be either rational or creative. Similarly, video games don’t “rewire” our brains into some permanently demented state. The brain is constantly changing. Video games may contribute some new connections but so does everything else we do. We can’t get stuck in video game dementia any more than our eyes can get stuck when we cross them.

Despite the brain porn out there, we can still learn a lot about behavior and creativity from neuroscience. In the next few days, for instance, I’ll review an article titled “Your Brain At Work” that really can teach us how to be more effective leaders and managers. I’ll do my best to write about neuroscience that’s well documented and substantiated. I believe there’s a lot of wheat out there. We just need to separate it from the chaff.

False Smile, Real Purchase

Is that a real smile?

As we’ve discussed before, your body influences your mood. If you want to improve your mood, all you really have to do is force yourself to smile. It’s hard to stay mad or blue or shiftless when your face is smiling.

I can’t prove this but I think that smiling can also improve your performance on a wide variety of tasks. I suspect that you make better decisions when you’re smiling. I bet you make better golf shots, too.

It’s not just my face that influences my mood. It’s also the faces of those around me. If they’re smiling, it’s harder for me to stay mad. There’s a lot written about the influence of groups on individual behavior.

Retailers seem to understand this intuitively. I occasionally go to jewelry stores to buy something for Suellen. I notice two things: 1) I’m always waited on by a woman; 2) she smiles a lot. I assume that she smiles to influence my mood (positively) to increase the chance of making a sale. It often works.

I understand the reason behind a false smile on another person (and, most often, I can defend against it). But what if the salesperson uses my own smile to influence my mood and propensity to buy?

It could happen soon. As reported in New Scientist, the Emotion Evoking System, developed at the University of Tokyo, can manipulate your image so you see a smiling (or frowning) version of yourself. The system takes a webcam image of you and manipulates it to put a smile on your face. It then displays the image to you. It’s like looking in the mirror but the image isn’t a faithful replication.

In preliminary tests, volunteers were divided into two groups and asked to perform mundane tasks. Both groups could see themselves in a webcam image. One group saw a plain image. The other group saw a manipulated image that enhanced their smile. Afterwards, the volunteers in each group were asked to rate their happiness while performing the task. The group that saw the manipulated image reported themselves to be happier.

In theory, such a system could help people who are depressed. It could also be used to sell more. You try on something and see a smiling version of you in the mirror. As they say, buyer beware!

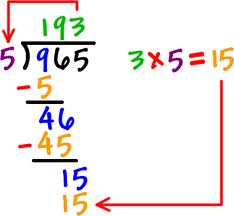

The Beauty of Long Division

Remember long division? You have a big number and want to divide it by a small number. You draw a little house, put the big number in it, and the small number outside it. Then you start guessing. Roughly how many times will the small number go into the large number?

You’ll be wrong the first time but it doesn’t matter. You start refining. If your first guess is close, you can refine it in a few steps. If your first guess is way off, you’ll need to take more refining steps. Either way, the method works. In fact, it’s pretty much foolproof. Even fourth graders can do it.
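For readers who like to see the guess-and-refine routine spelled out, here is a minimal Python sketch. It is my own illustration, not something from Dennett's book: the function name long_division is made up, and starting every digit guess at 9 and stepping down is just one deliberately rough way to guess. It assumes a non-negative dividend and a positive divisor.

```python
def long_division(dividend: int, divisor: int) -> tuple[int, int]:
    """Schoolbook long division by guessing each quotient digit and refining.

    Illustrative sketch only: each digit guess starts at 9 (an assumption,
    chosen to be rough on purpose) and is lowered until it fits.
    """
    if dividend < 0 or divisor <= 0:
        raise ValueError("expects dividend >= 0 and divisor > 0")

    quotient = 0
    remainder = 0
    for ch in str(dividend):
        # Bring down the next digit of the big number.
        remainder = remainder * 10 + int(ch)

        # First guess: the small number goes in 9 times. Usually wrong.
        digit = 9
        # Refine: lower the guess until it no longer overshoots.
        while digit * divisor > remainder:
            digit -= 1

        remainder -= digit * divisor
        quotient = quotient * 10 + digit

    return quotient, remainder


if __name__ == "__main__":
    q, r = long_division(7429, 6)
    print(f"7429 / 6 = {q} remainder {r}")
```

A bad first guess costs only a few extra trips through the refining loop; the answer comes out the same either way.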

I got this example from an elegant little book I’m reading called Intuition Pumps and Other Tools for Thinking by the philosopher, Daniel Dennett. The general idea is that thinkers, like blacksmiths, have to make their own tools. We’ve used some tools for millennia; others are of more recent vintage.

As long division illustrates, one tool is approximation. (Technically, it’s a kind of heuristic.) In the real world, we don’t always have to be precise. We start with a guess and then refine it. The important thing is to make the guess. That’s the ante for getting into the game.

It’s surprising how often this works. In fact, now that I’m thinking about it, I realize that I make guesses all the time. Guessing is certainly a good management tool. In the hurly-burly of commerce, it’s not always clear what’s happening. It usually takes accountants four to six weeks to figure out precisely what happened in a given quarter. In the meantime, it’s useful for day-to-day managers to make educated guesses. Learning to make such guesses is a critical skill.

I realized that I also apply this to my writing. I sometimes get writer’s block. I know the argument I want to make but I don’t know how to frame it. I can’t get started. When that happens, I make an effort to just write something, even if it’s sloppy and poorly phrased. Once I have something written down, I can then shift gears. I’m no longer writing; I’m editing. Somehow, that seems much easier.

As you think about thinking, think about guessing. In many cases, you’re more likely to get to a clear thought through approximation than through a brilliant flash of insight.

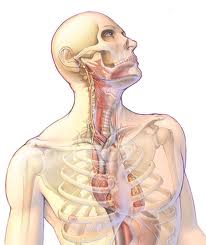

Thinking and Health

Does the mind influence the body or vice-versa? It seems that it happens both ways and the vagus nerve plays a key role in keeping you both happy and healthy (and creative).

Also known as the tenth cranial nerve, the vagus nerve links the brain with the lungs, digestive system, and heart. Among many other things, the vagus nerve sends the signals that help you calm down after you’ve been startled or frightened. It helps you return to a “normal” state. A healthy vagus nerve promotes resilience — the ability to recover from stress. (The vagus nerve is not in the spinal cord, meaning that people with spinal cord injuries can still sense much of their system).

The vagus also helps control your heartbeat. To use oxygen efficiently, your heart should beat a bit faster when you breathe in and a bit slower when you breathe out. The ratio of your breathing-in heartbeat to your breathing-out heartbeat is known as the vagal tone.
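Taking that description at face value, the ratio can be written as a tiny Python sketch. The function name vagal_tone_ratio and the heart rates in the example are hypothetical, made up purely for illustration; how researchers actually measure vagal tone may well be more involved than this.

```python
def vagal_tone_ratio(inhale_bpm: float, exhale_bpm: float) -> float:
    """Ratio of breathing-in heart rate to breathing-out heart rate.

    Illustrative only: follows the plain-language description in the text,
    not any particular laboratory method.
    """
    if inhale_bpm <= 0 or exhale_bpm <= 0:
        raise ValueError("heart rates must be positive")
    return inhale_bpm / exhale_bpm


if __name__ == "__main__":
    # Hypothetical numbers: 72 beats per minute breathing in, 60 breathing out.
    print(round(vagal_tone_ratio(72, 60), 2))
```

A heart that speeds up more on the in-breath gives a ratio further above 1.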

Physiologists have long known that a higher vagal tone is generally associated with better physical and mental health. Most researchers assumed, however, that we can’t improve our vagal tone. Some lucky people have a higher vagal tone; some unlucky people have a lower one. It’s determined by factors beyond our control.

Then Barbara Fredrickson and Bethany Kok decided to see if that was really true. Their research – published in Psychological Science – suggests that we can improve our vagal tone. By doing so, we can create a virtuous circle: improving our mental outlook can improve vagal tone which, in turn, can make it easier to improve our mental outlook. (For two non-technical articles on this research, click here and here).

In their experiment, Fredrickson and Kok randomly divided volunteers into two groups. One group was taught a form of meditation (loving kindness meditation) that engenders “feelings of goodwill”; the other was not. Both groups were also asked to keep track of their positive and negative emotions.

The results were fairly straightforward: “All the volunteers … showed an increase in positive emotions and feelings of social connectedness – and the more pronounced this effect, the more their vagal tone had increased ….” Additionally, those who meditated improved their vagal tone much more than those who didn’t.

The virtuous circle seems to be associated with social connectedness, which Fredrickson refers to as a “potent wellness behavior”. The loving kindness meditation promotes a sense of social connectedness. That, in turn, improves vagal tone. That, in turn, promotes a sense of social connectedness. Bottom line: it pays to think positive thoughts about yourself and others.